Interactive Canvas is a framework built on Google Assistant that lets developers add visual, immersive experiences to Conversational Actions. The visual experience is an interactive web app that Assistant sends as a response to the user during the conversation. Unlike rich responses, which appear inline in an Assistant conversation, an Interactive Canvas web app renders as a full-screen web view.

Use Interactive Canvas if you want to do any of the following in your Action:

- Create full-screen visuals

- Create custom animations and transitions

- Visualize data

- Create custom layouts and GUIs

Supported devices

Interactive Canvas is currently available on the following devices:

- Smart displays

- Android mobile devices

How it works

An Action that uses Interactive Canvas consists of two main components:

- Conversational Action: An Action that uses a conversational interface to fulfill user requests. You can build your conversation with either Actions Builder or the Actions SDK.

- Web app: A front-end web app with customized visuals that your Action sends as a response to users during a conversation. You build the web app with web technologies such as HTML, JavaScript, and CSS.

Users interacting with an Interactive Canvas Action have a back-and-forth conversation with Google Assistant to reach their goal. With Interactive Canvas, however, most of this conversation takes place within the context of your web app. To connect your Conversational Action to your web app, you must include the Interactive Canvas API in your web app code.

- Interactive Canvas library: A JavaScript library that you include in the web app to enable communication, through an API, between the web app and your Conversational Action. For more information, see the Interactive Canvas API documentation.

In addition to including the Interactive Canvas library, you must return the `Canvas` response type in your conversation to open your web app on the user's device. You can also use a `Canvas` response to update your web app based on the user's input.

- `Canvas`: A response that contains the URL of the web app and the data to pass to it. Actions Builder can automatically populate the `Canvas` response with the matched intent and current scene data to update the web app. Alternatively, you can send a `Canvas` response from a webhook using the Node.js fulfillment library. For more information, see Canvas prompts.
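To make the "URL plus data" idea concrete, here is a minimal sketch of what the body of a webhook response carrying a Canvas prompt might look like. The helper name `buildCanvasPrompt`, the example URL, the `color` field, and the exact JSON field names (`prompt`, `canvas`, `firstSimple`) are assumptions made for illustration; check the Canvas prompts documentation for the authoritative shape.

```javascript
// Hypothetical helper (not part of any Google library): builds a plain object
// shaped like a webhook response that carries a Canvas prompt.
// The field names below are an assumption, not a verified schema.
function buildCanvasPrompt(url, data, speech) {
  if (typeof url !== 'string' || url.length === 0) {
    throw new TypeError('url must be a non-empty string');
  }
  const prompt = {
    canvas: {
      url, // where the device loads the web app from
      data: Array.isArray(data) ? data : [data], // payload for the web app
    },
  };
  if (speech) {
    // Optional spoken response that accompanies the visual update.
    prompt.firstSimple = { speech };
  }
  return { prompt };
}

// Example: ask the (hypothetical) web app to turn the screen blue.
const response = buildCanvasPrompt(
  'https://example.com/cool-colors',
  { color: 'blue' },
  'Turning the screen blue.'
);
console.log(JSON.stringify(response, null, 2));
```

In a real webhook you would normally let the Node.js fulfillment library construct this response rather than assembling the object by hand; the plain object is used here only so that the two parts of the response, the URL and the data, are easy to see.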

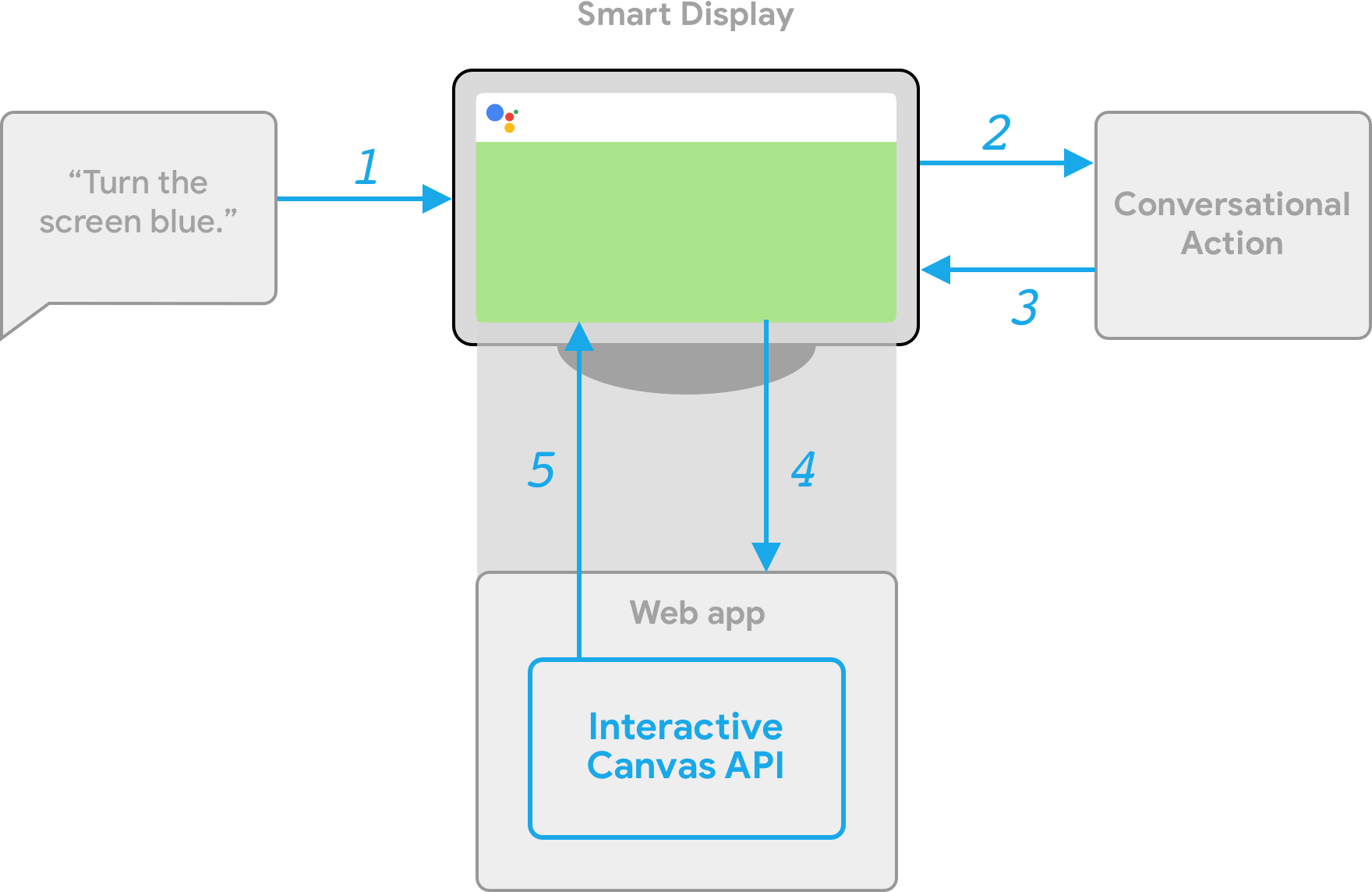

To illustrate how Interactive Canvas works, imagine a hypothetical Action called Cool Colors that changes the device's screen color to a color the user specifies. After the user invokes the Action, the following flow takes place:

- The user says "Turn the screen blue" to the Assistant device.

- The Actions on Google platform routes the user's request to your conversational logic to match an intent.

- The platform matches the intent with the Action's scene, which triggers an event and sends a `Canvas` response to the device. The device loads a web app using the URL provided in the response (if it has not already been loaded).

- When the web app loads, it registers callbacks with the Interactive Canvas API. If the `Canvas` response contains a `data` field, the object value of the `data` field is passed to the web app's registered `onUpdate` callback. In this example, the conversational logic sends a `Canvas` response whose `data` field includes a variable with the value `blue`.

- Upon receiving the `data` value of the `Canvas` response, the `onUpdate` callback can run custom logic for your web app and make the defined changes. In this example, the `onUpdate` callback reads the color from `data` and turns the screen blue.
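The web app side of the Cool Colors flow above could be sketched roughly as follows. The helper `createCallbacks`, the `color` field name, and the normalization of `data` into a list are assumptions made for illustration; `interactiveCanvas.ready` with an `onUpdate` callback is the registration entry point of the Interactive Canvas library, which is only available when the page runs inside Assistant, so the call is guarded.

```javascript
// Hypothetical helper: builds the callbacks object for the Cool Colors web app.
// `applyColor` is injected so the logic can be exercised outside a browser.
function createCallbacks(applyColor) {
  return {
    onUpdate(data) {
      // Assumption: accept either a single object or a list of objects.
      const entries = Array.isArray(data) ? data : [data];
      for (const entry of entries) {
        // `color` is an illustrative field name chosen for this sketch.
        if (entry && typeof entry.color === 'string') {
          applyColor(entry.color);
        }
      }
    },
  };
}

// Register the callbacks only when the Interactive Canvas library is loaded.
if (typeof interactiveCanvas !== 'undefined') {
  interactiveCanvas.ready(
    createCallbacks((color) => {
      document.body.style.backgroundColor = color;
    })
  );
}
```

Keeping the color-handling logic separate from the registration call is a design choice for this sketch: it lets you test `onUpdate` with plain objects before deploying the web app.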

Client-side and server-side fulfillment

When building an Interactive Canvas Action, you can choose between two fulfillment implementation paths: server fulfillment or client fulfillment. With server fulfillment, you primarily use APIs that require a webhook. With client fulfillment, you can use client-side APIs and, if needed, APIs that require a webhook for non-Canvas features (such as account linking).

If you choose to build with server webhook fulfillment at the project creation stage, you must deploy a webhook to handle the conversational logic, along with client-side JavaScript to update the web app and manage communication between the two.

If you choose to build with client fulfillment (currently available in Developer Preview), you can use new client-side APIs to build your Action's logic exclusively in the web app. This simplifies the development experience, reduces latency between conversational turns, and lets you use on-device capabilities. If needed, you can also switch from the client to server-side logic.

For more information about client-side capabilities, see Build with client-side fulfillment.

Next steps

To learn how to build a web app for Interactive Canvas, see Web apps.

To see the code for an Interactive Canvas Action, see the sample on GitHub.