Explore in Dialogflow

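Click "Continue" to import the Responses sample into Dialogflow. Then follow the steps below to deploy and test the sample:

- Enter an agent name and create a new Dialogflow agent for the sample.

- After the agent finishes importing, click "Go to agent".

- From the main navigation menu, go to "Fulfillment".

- Enable the "Inline Editor", then click "Deploy". The editor contains the sample code.

- From the main navigation menu, go to "Integrations", then click "Google Assistant".

- In the modal window that appears, enable "Auto-preview changes", then click "Test" to open the Actions simulator.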

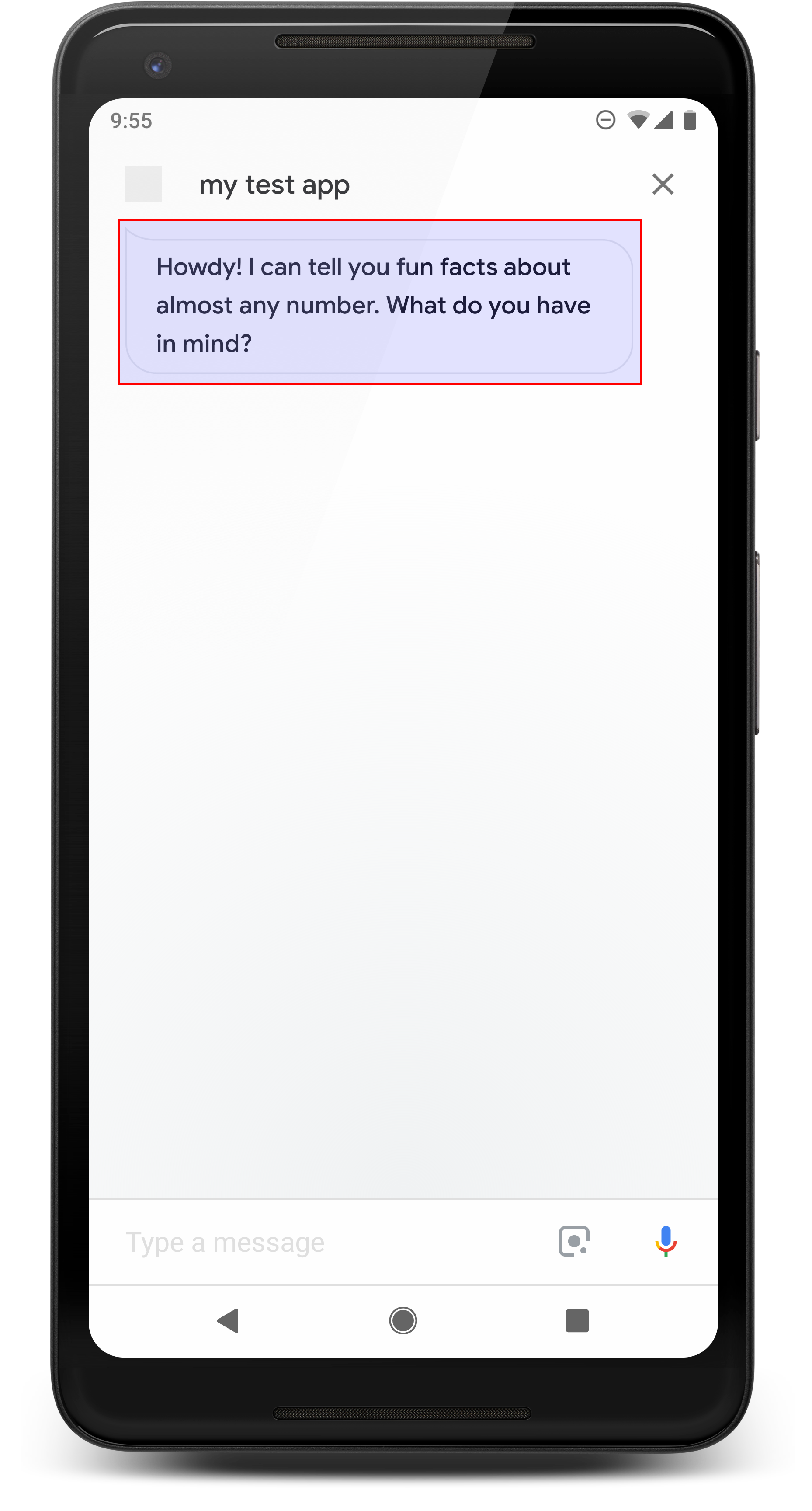

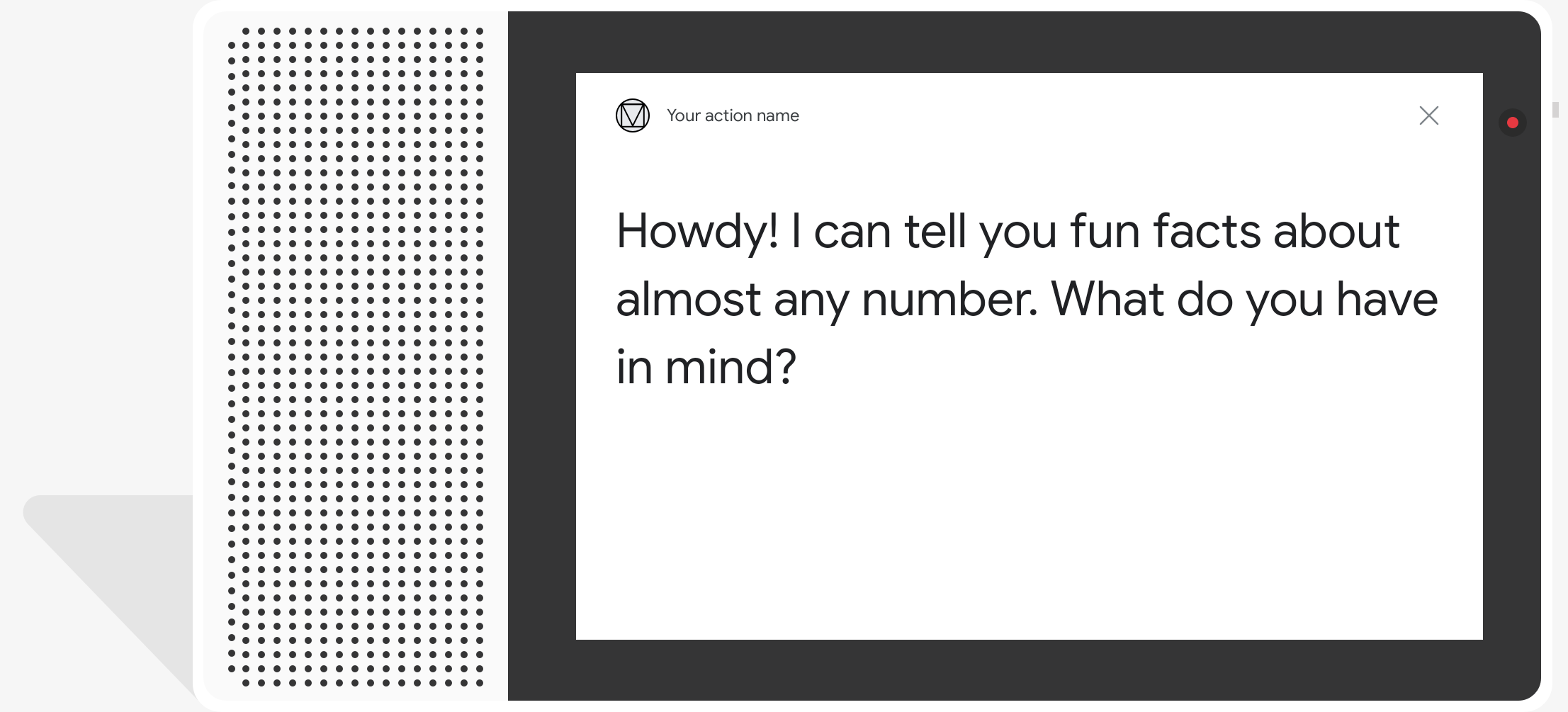

- In the simulator, enter `Talk to my test app` to test the sample.

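Simple responses are displayed as a chat bubble and are spoken using text-to-speech (TTS) or Speech Synthesis Markup Language (SSML).

By default, the TTS text is used as the chat bubble content. If that text already reads well on screen, you don't need to specify any display text for the chat bubble.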
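The default described above can be modeled in a few lines. This is a minimal sketch, not Google's implementation: `bubbleText` is a hypothetical helper, and it only covers plain-text TTS (how SSML markup is rendered in the bubble is not modeled here). The field names `textToSpeech` and `displayText` are the ones used in the JSON samples on this page.

```javascript
// Hypothetical helper that models the documented default: a chat bubble shows
// displayText when it is set and falls back to the TTS text otherwise.
// Assumes plain-text TTS; SSML rendering is not modeled.
function bubbleText(simpleResponse) {
  return simpleResponse.displayText ?? simpleResponse.textToSpeech;
}

// No displayText: the spoken text doubles as the bubble text.
const spokenOnly = { textToSpeech: 'Welcome back! What would you like to do?' };

// displayText set: the bubble shows a shorter version of what is spoken.
const withDisplay = {
  textToSpeech:
    "Here's an example of a simple response. Which type of response would you like to see next?",
  displayText: "Here's a simple response. Which response would you like to see next?",
};

console.log(bubbleText(spokenOnly)); // Welcome back! What would you like to do?
console.log(bubbleText(withDisplay)); // Here's a simple response. Which response would you like to see next?
```

Using `??` rather than `||` means an explicitly empty `displayText` is kept as-is instead of silently falling back to the spoken text.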

You can also review the conversation design guidelines to learn how to incorporate these visual elements into your Action.

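Properties

Simple responses have the following requirements and optional properties that you can configure:

- Supported on surfaces with the `actions.capability.AUDIO_OUTPUT` or `actions.capability.SCREEN_OUTPUT` capabilities.

- 640-character limit per chat bubble. Strings longer than the limit are truncated at the first word break (or whitespace) before 640 characters.

- Chat bubble content must be a phonetic subset or a complete transcript of the TTS/SSML output. This helps users follow what you're saying and improves comprehension in various conditions.

- At most two chat bubbles per turn.

- The chat head (logo) that you submit to Google must be 192x192 pixels and cannot be animated.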
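Two of the limits above (640 characters per bubble, two bubbles per turn) are easy to check before sending a response. The sketch below is an approximation under stated assumptions: `truncateBubbleText` and `checkBubbleCount` are hypothetical helpers, the hard cut for text with no whitespace is an assumption, and JavaScript's `length` counts UTF-16 code units, so the count is only approximate for emoji and other astral characters.

```javascript
// Limits taken from the list above.
const MAX_BUBBLE_CHARS = 640;
const MAX_BUBBLES_PER_TURN = 2;

// Approximates the documented truncation: keep at most `limit` characters,
// cutting at the last whitespace so no word is split.
// Note: String.length counts UTF-16 code units, not user-visible characters.
function truncateBubbleText(text, limit = MAX_BUBBLE_CHARS) {
  if (text.length <= limit) return text;
  // Start at index `limit` so a space right after the limit still counts as a break.
  for (let i = limit; i > 0; i--) {
    if (/\s/.test(text[i])) return text.slice(0, i).trimEnd();
  }
  // Assumption: with no whitespace at all, fall back to a hard cut.
  return text.slice(0, limit);
}

// Counts simpleResponse items (one chat bubble each) and rejects a turn that
// exceeds the two-bubble limit.
function checkBubbleCount(items) {
  const bubbles = items.filter((item) => 'simpleResponse' in item).length;
  if (bubbles > MAX_BUBBLES_PER_TURN) {
    throw new Error(`${bubbles} chat bubbles in one turn; the limit is ${MAX_BUBBLES_PER_TURN}`);
  }
  return bubbles;
}

console.log(truncateBubbleText('hello world', 8)); // hello
```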

Sample code

Node.js (Dialogflow)

```javascript
const {dialogflow, SimpleResponse} = require('actions-on-google');

const app = dialogflow();

app.intent('Simple Response', (conv) => {
  conv.ask(new SimpleResponse({
    speech: `Here's an example of a simple response. ` +
      `Which type of response would you like to see next?`,
    text: `Here's a simple response. ` +
      `Which response would you like to see next?`,
  }));
});
```

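Java (Dialogflow)

```java
import com.google.actions.api.ActionRequest;
import com.google.actions.api.ActionResponse;
import com.google.actions.api.DialogflowApp;
import com.google.actions.api.ForIntent;
import com.google.actions.api.response.ResponseBuilder;
import com.google.api.services.actions_fulfillment.v2.model.SimpleResponse;

// The class name is illustrative; any DialogflowApp subclass works.
public class SimpleResponseApp extends DialogflowApp {

  @ForIntent("Simple Response")
  public ActionResponse welcome(ActionRequest request) {
    ResponseBuilder responseBuilder = getResponseBuilder(request);
    responseBuilder.add(
        new SimpleResponse()
            .setTextToSpeech(
                "Here's an example of a simple response. "
                    + "Which type of response would you like to see next?")
            .setDisplayText(
                "Here's a simple response. Which response would you like to see next?"));
    return responseBuilder.build();
  }
}
```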

Node.js (Actions SDK)

```javascript
// Inside an intent handler, where `conv` is the conversation object.
conv.ask(new SimpleResponse({
  speech: `Here's an example of a simple response. ` +
    `Which type of response would you like to see next?`,
  text: `Here's a simple response. ` +
    `Which response would you like to see next?`,
}));
```

Java (Actions SDK)

```java
// Body of an intent handler; `request` is the incoming ActionRequest.
ResponseBuilder responseBuilder = getResponseBuilder(request);
responseBuilder.add(
    new SimpleResponse()
        .setTextToSpeech(
            "Here's an example of a simple response. "
                + "Which type of response would you like to see next?")
        .setDisplayText(
            "Here's a simple response. Which response would you like to see next?"));
return responseBuilder.build();
```

JSON (Dialogflow)

Note that the JSON below describes a webhook response.

```json
{
  "payload": {
    "google": {
      "expectUserResponse": true,
      "richResponse": {
        "items": [
          {
            "simpleResponse": {
              "textToSpeech": "Here's an example of a simple response. Which type of response would you like to see next?",
              "displayText": "Here's a simple response. Which response would you like to see next?"
            }
          }
        ]
      }
    }
  }
}
```

JSON (Actions SDK)

Note that the JSON below describes a webhook response.

```json
{
  "expectUserResponse": true,
  "expectedInputs": [
    {
      "possibleIntents": [
        {
          "intent": "actions.intent.TEXT"
        }
      ],
      "inputPrompt": {
        "richInitialPrompt": {
          "items": [
            {
              "simpleResponse": {
                "textToSpeech": "Here's an example of a simple response. Which type of response would you like to see next?",
                "displayText": "Here's a simple response. Which response would you like to see next?"
              }
            }
          ]
        }
      }
    }
  ]
}
```
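The two webhook JSON bodies above carry the same rich response items; only the envelope around them differs. As a minimal sketch, assuming you build the webhook response by hand instead of through the client libraries, the hypothetical helpers below (`simpleResponse`, `dialogflowBody`, and `actionsSdkBody` are illustrative names, not library APIs) produce both shapes from one list of items.

```javascript
// Hypothetical helpers (not part of any Google library) that build the two
// webhook bodies shown above from the same list of rich response items.

// One chat bubble: displayText is optional and omitted when not provided.
function simpleResponse(textToSpeech, displayText) {
  const item = { textToSpeech };
  if (displayText !== undefined) item.displayText = displayText;
  return { simpleResponse: item };
}

// Dialogflow fulfillment: items go under payload.google.richResponse.
function dialogflowBody(items) {
  return {
    payload: {
      google: {
        expectUserResponse: true,
        richResponse: { items },
      },
    },
  };
}

// Actions SDK conversation webhook: items go under
// expectedInputs[].inputPrompt.richInitialPrompt.
function actionsSdkBody(items) {
  return {
    expectUserResponse: true,
    expectedInputs: [
      {
        possibleIntents: [{ intent: 'actions.intent.TEXT' }],
        inputPrompt: { richInitialPrompt: { items } },
      },
    ],
  };
}

const items = [
  simpleResponse(
    "Here's an example of a simple response. Which type of response would you like to see next?",
    "Here's a simple response. Which response would you like to see next?",
  ),
];

console.log(JSON.stringify(dialogflowBody(items), null, 2));
console.log(JSON.stringify(actionsSdkBody(items), null, 2));
```

Keeping the items separate from the envelope makes it simpler to reuse the same bubbles across both platforms and to validate them (for example, against the two-bubble limit) in one place.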

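SSML and sounds

Using SSML and sounds in your responses gives them more polish and enhances the user experience. The following code snippets show how to create a response that uses SSML: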

Node.js (Dialogflow)

```javascript
const {dialogflow} = require('actions-on-google');

const app = dialogflow();

app.intent('SSML', (conv) => {
  conv.ask(`<speak>` +
    `Here are <say-as interpret-as="characters">SSML</say-as> examples. ` +
    `Here is a buzzing fly ` +
    `<audio src="https://actions.google.com/sounds/v1/animals/buzzing_fly.ogg"></audio>` +
    ` and here's a short pause <break time="800ms"/>` +
    `</speak>`);
  conv.ask('Which response would you like to see next?');
});
```

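Java (Dialogflow)

```java
import com.google.actions.api.ActionRequest;
import com.google.actions.api.ActionResponse;
import com.google.actions.api.DialogflowApp;
import com.google.actions.api.ForIntent;
import com.google.actions.api.response.ResponseBuilder;

// The class name is illustrative; any DialogflowApp subclass works.
public class SsmlApp extends DialogflowApp {

  @ForIntent("SSML")
  public ActionResponse ssml(ActionRequest request) {
    ResponseBuilder responseBuilder = getResponseBuilder(request);
    responseBuilder.add(
        "<speak>"
            + "Here are <say-as interpret-as=\"characters\">SSML</say-as> examples. "
            + "Here is a buzzing fly "
            + "<audio src=\"https://actions.google.com/sounds/v1/animals/buzzing_fly.ogg\"></audio>"
            + " and here's a short pause <break time=\"800ms\"/>"
            + "</speak>");
    responseBuilder.add("Which response would you like to see next?");
    return responseBuilder.build();
  }
}
```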

Node.js (Actions SDK)

```javascript
// Inside an intent handler, where `conv` is the conversation object.
conv.ask(`<speak>` +
  `Here are <say-as interpret-as="characters">SSML</say-as> examples. ` +
  `Here is a buzzing fly ` +
  `<audio src="https://actions.google.com/sounds/v1/animals/buzzing_fly.ogg"></audio>` +
  ` and here's a short pause <break time="800ms"/>` +
  `</speak>`);
conv.ask('Which response would you like to see next?');
```

Java (Actions SDK)

```java
// Body of an intent handler; `request` is the incoming ActionRequest.
ResponseBuilder responseBuilder = getResponseBuilder(request);
responseBuilder.add(
    "<speak>"
        + "Here are <say-as interpret-as=\"characters\">SSML</say-as> examples. "
        + "Here is a buzzing fly "
        + "<audio src=\"https://actions.google.com/sounds/v1/animals/buzzing_fly.ogg\"></audio>"
        + " and here's a short pause <break time=\"800ms\"/>"
        + "</speak>");
responseBuilder.add("Which response would you like to see next?");
return responseBuilder.build();
```

JSON (Dialogflow)

Note that the JSON below describes a webhook response.

```json
{
  "payload": {
    "google": {
      "expectUserResponse": true,
      "richResponse": {
        "items": [
          {
            "simpleResponse": {
              "textToSpeech": "<speak>Here are <say-as interpret-as=\"characters\">SSML</say-as> examples. Here is a buzzing fly <audio src=\"https://actions.google.com/sounds/v1/animals/buzzing_fly.ogg\"></audio> and here's a short pause <break time=\"800ms\"/></speak>"
            }
          },
          {
            "simpleResponse": {
              "textToSpeech": "Which response would you like to see next?"
            }
          }
        ]
      }
    }
  }
}
```

JSON (Actions SDK)

Note that the JSON below describes a webhook response.

```json
{
  "expectUserResponse": true,
  "expectedInputs": [
    {
      "possibleIntents": [
        {
          "intent": "actions.intent.TEXT"
        }
      ],
      "inputPrompt": {
        "richInitialPrompt": {
          "items": [
            {
              "simpleResponse": {
                "textToSpeech": "<speak>Here are <say-as interpret-as=\"characters\">SSML</say-as> examples. Here is a buzzing fly <audio src=\"https://actions.google.com/sounds/v1/animals/buzzing_fly.ogg\"></audio> and here's a short pause <break time=\"800ms\"/></speak>"
              }
            },
            {
              "simpleResponse": {
                "textToSpeech": "Which response would you like to see next?"
              }
            }
          ]
        }
      }
    }
  ]
}
```
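Because SSML is XML, any plain text you splice into it (user input, names, product titles) must have its special characters escaped, or the markup may be rejected or misread. The sketch below is an illustrative pattern, not a library API: `escapeSsml` and `speak` are hypothetical helpers that escape the five predefined XML entities and wrap already-built fragments in a single `<speak>` root. They do not validate tag or attribute names, so a typo such as `interpet-as` would still pass through unnoticed.

```javascript
// Hypothetical helper: escapes the five predefined XML entities so plain text
// can be embedded safely inside SSML. `&` must be replaced first.
function escapeSsml(text) {
  return text
    .replace(/&/g, '&amp;')
    .replace(/</g, '&lt;')
    .replace(/>/g, '&gt;')
    .replace(/"/g, '&quot;')
    .replace(/'/g, '&apos;');
}

// Hypothetical helper: wraps already-built SSML fragments in one <speak> root.
// Fragments are joined as-is, so escape any plain text before passing it in.
function speak(...fragments) {
  return `<speak>${fragments.join('')}</speak>`;
}

const ssml = speak(
  escapeSsml('Fish & chips'),
  ' <break time="800ms"/> ',
  '<say-as interpret-as="characters">SSML</say-as>',
);

console.log(ssml);
// <speak>Fish &amp; chips <break time="800ms"/> <say-as interpret-as="characters">SSML</say-as></speak>
```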

See the SSML reference documentation for more information.

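Sound library

The YouTube Audio Library provides a wide variety of free, short sound effects. These sounds are hosted for you, so you only need to include them in your SSML.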
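A hosted sound is included with an SSML `<audio>` element, as in the buzzing-fly samples above. Per the W3C SSML specification, the content of an `<audio>` element is rendered instead if the audio cannot be played, which makes a short text fallback useful. The sketch below assumes that behavior; `audioTag` is a hypothetical helper, and it escapes both the URL attribute and the fallback text. The sound URL is the one from this page's samples.

```javascript
// Hypothetical helper: builds an SSML <audio> element. Per the W3C SSML spec,
// the element's content is rendered instead if the audio cannot be played.
function audioTag(src, fallbackText = '') {
  // Escape XML special characters in the attribute value and the text content.
  const esc = (s) =>
    s.replace(/&/g, '&amp;').replace(/</g, '&lt;').replace(/>/g, '&gt;').replace(/"/g, '&quot;');
  return `<audio src="${esc(src)}">${esc(fallbackText)}</audio>`;
}

const fly = audioTag(
  'https://actions.google.com/sounds/v1/animals/buzzing_fly.ogg',
  'a buzzing fly',
);
const ssml = `<speak>Here is ${fly} and here's a short pause <break time="800ms"/></speak>`;

console.log(ssml);
```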