Page Summary

-

Rich Responses in Dialogflow and Actions on Google enhance user interactions with visual and audio elements, including basic cards, suggestion chips, browsing carousels, media responses, and table cards.

-

Basic Cards display concise information and are often paired with suggestion chips to continue the conversation.

-

Browsing Carousels allow users to scroll through linked web content, and suggestion chips are recommended for ongoing interaction after the carousel is sent.

-

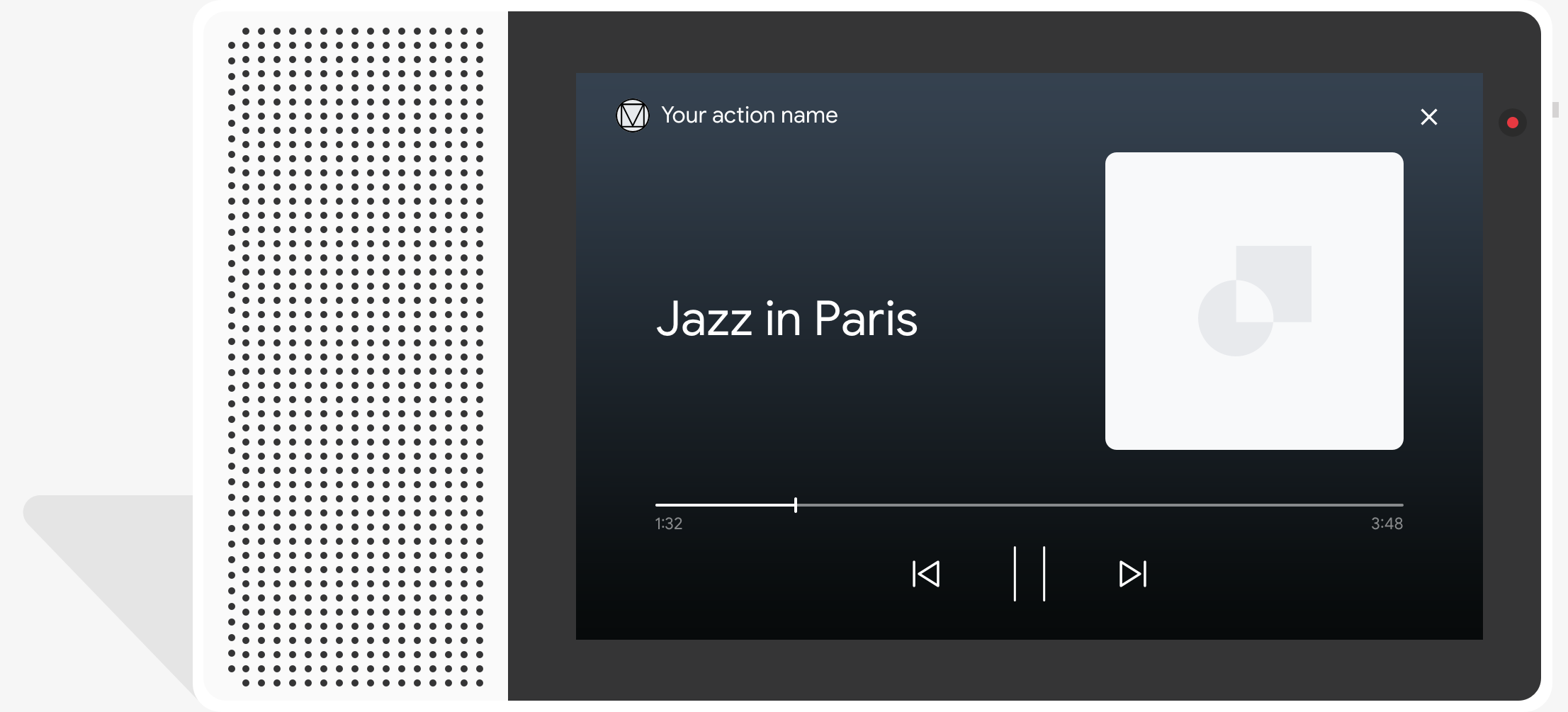

Media Responses enable Actions to play audio content with playback controls and require handling the

actions.intent.MEDIA_STATUSintent after playback. -

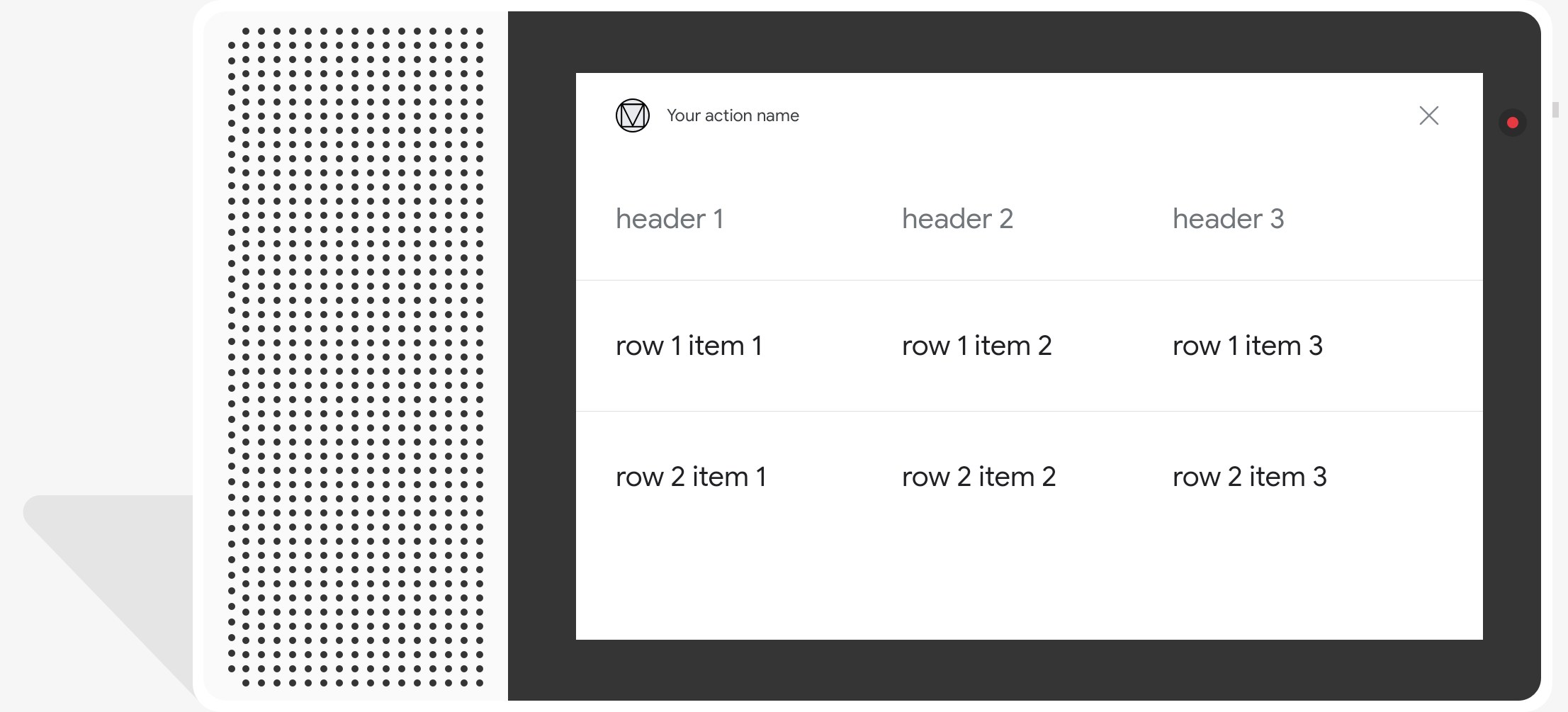

Table Cards display static tabular data and are supported on devices with screen output, allowing customization of title, subtitle, image, columns, rows, and a single button.

Explore in Dialogflow

Click Continue to import our Responses sample in Dialogflow. Then, follow the steps below to deploy and test the sample:

- Enter an agent name and create a new Dialogflow agent for the sample.

- After the agent is done importing, click Go to agent.

- From the main navigation menu, go to Fulfillment.

- Enable the Inline Editor, then click Deploy. The editor contains the sample code.

- From the main navigation menu, go to Integrations, then click Google Assistant.

- In the modal window that appears, enable Auto-preview changes and click Test to open the Actions simulator.

- In the simulator, enter "Talk to my test app" to test the sample!

Use a rich response if you want to display visual elements to enhance user interactions with your Action. These visual elements can provide hints on how to continue a conversation.

Rich responses can appear on screen-only or audio and screen experiences. They can contain the following components:

- One or two simple responses (chat bubbles).

- An optional basic card.

- Optional suggestion chips.

- An optional link-out chip.

You can also review our conversation design guidelines to learn how to incorporate these visual elements in your Action.

Properties

Rich responses have the following requirements and optional properties that you can configure:

- Supported on surfaces with the actions.capability.SCREEN_OUTPUT capability.

- The first item in a rich response must be a simple response.

- At most two simple responses.

- At most one basic card or StructuredResponse.

- At most 8 suggestion chips.

- Suggestion chips are not allowed in a FinalResponse.

- Linking out to the web from smart displays is currently not supported.
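As a rough illustration of the requirements above, the following Node.js sketch checks a richResponse object, shaped like the webhook JSON samples in this guide, before it is sent. The function name validateRichResponse, its isFinalResponse option, and the error messages are hypothetical and not part of the client library; the sketch covers only the limits listed in this section.

```javascript
// Hypothetical helper (not part of any SDK): returns a list of rule violations
// for a richResponse object shaped like the webhook JSON in this guide.
function validateRichResponse(richResponse, {isFinalResponse = false} = {}) {
  const errors = [];
  const items = richResponse.items || [];
  const suggestions = richResponse.suggestions || [];

  // The first item in a rich response must be a simple response.
  if (items.length === 0 || !('simpleResponse' in items[0])) {
    errors.push('The first item must be a simple response.');
  }

  // At most two simple responses.
  const simpleCount = items.filter((item) => 'simpleResponse' in item).length;
  if (simpleCount > 2) {
    errors.push('At most two simple responses are allowed.');
  }

  // At most one basic card or StructuredResponse.
  // Assumption: these appear under the keys basicCard and structuredResponse.
  const cardCount = items.filter(
      (item) => 'basicCard' in item || 'structuredResponse' in item).length;
  if (cardCount > 1) {
    errors.push('At most one basic card or StructuredResponse is allowed.');
  }

  // At most 8 suggestion chips, and none in a final response.
  if (suggestions.length > 8) {
    errors.push('At most 8 suggestion chips are allowed.');
  }
  if (isFinalResponse && suggestions.length > 0) {
    errors.push('Suggestion chips are not allowed in a FinalResponse.');
  }
  return errors;
}

const example = {
  items: [
    {simpleResponse: {textToSpeech: 'Here is a card.'}},
    {basicCard: {title: 'Title', formattedText: 'Body text'}},
  ],
  suggestions: [{title: 'Next'}],
};
console.log(validateRichResponse(example)); // prints []
```

Returning a list of messages rather than throwing on the first problem makes it easier to report every violation at once during development.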

The following sections show you how to build various types of rich responses.

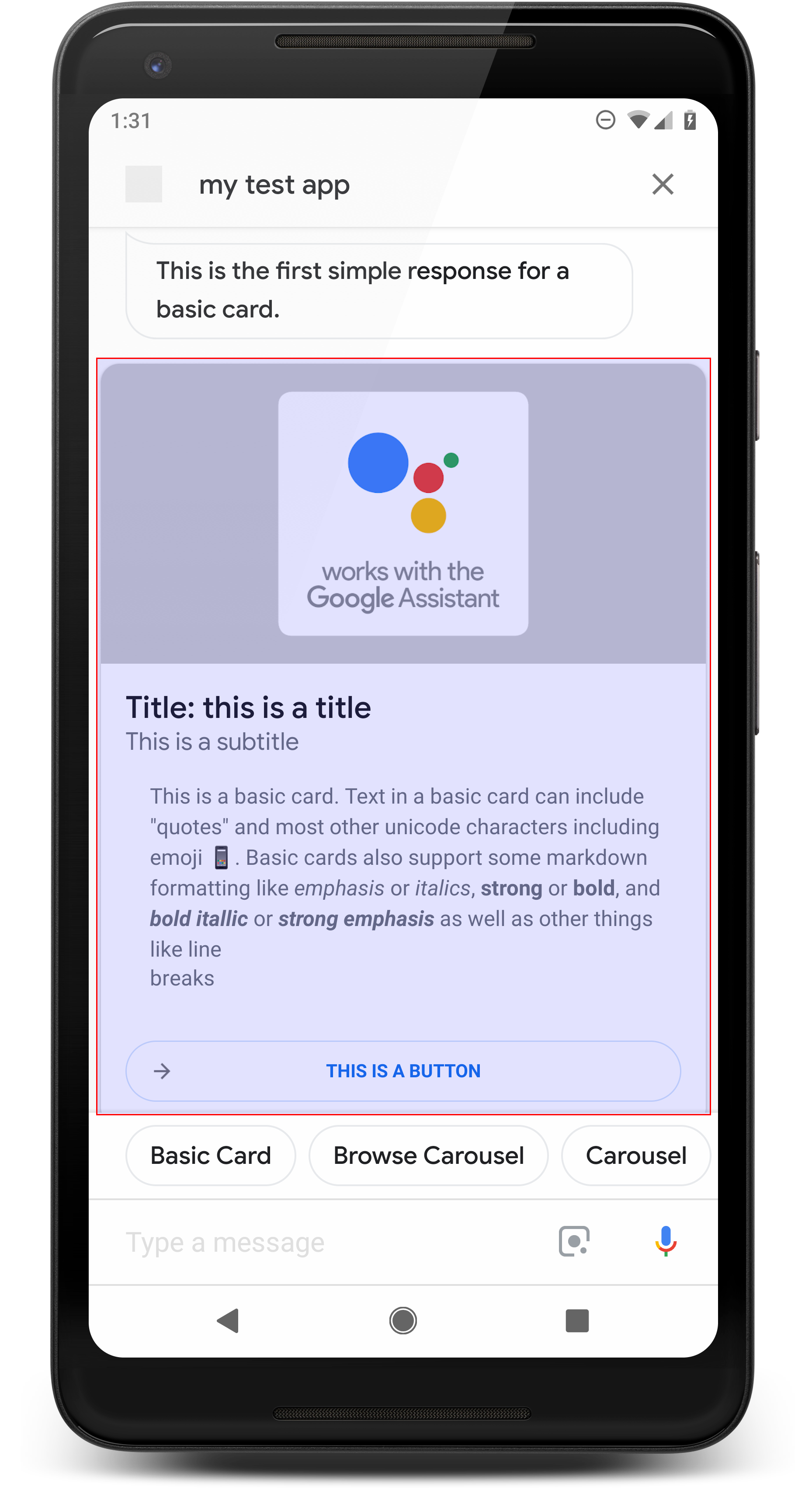

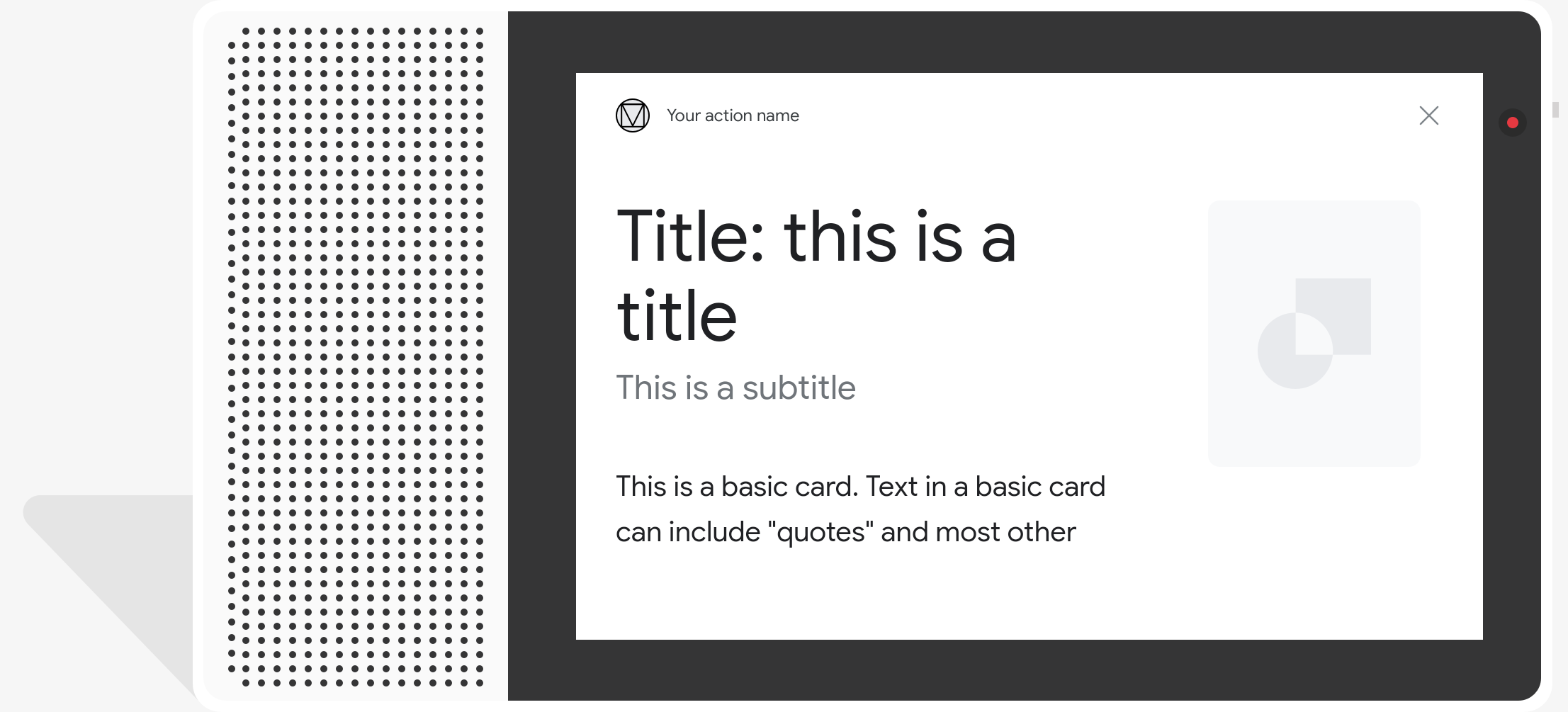

Basic card

A basic card displays information that can include the following:

- Image

- Title

- Sub-title

- Text body

- Link button

- Border

Use basic cards mainly for display purposes. They are designed to be concise, to present key (or summary) information to users, and to allow users to learn more if you choose (using a weblink).

In most situations, you should add suggestion chips below the cards to continue or pivot the conversation.

Avoid repeating in the chat bubble the information that is already presented in the card.

Properties

The basic card response type has the following requirements and optional properties that you can configure:

- Supported on surfaces with the actions.capability.SCREEN_OUTPUT capability.

- Formatted text (required if there's no image)

  - Plain text by default.

  - Must not contain a link.

  - 10 line limit with an image, 15 line limit without an image. This is about 500 (with image) or 750 (without image) characters. Smaller screen phones also truncate text earlier than larger screen phones. If text contains too many lines, it's truncated at the last word break with an ellipsis.

  - A limited subset of markdown is supported:

    - New line with a double space followed by \n

    - **bold**

    - *italics*

- Image (required if there's no formatted text)

  - All images forced to be 192 dp tall.

  - If the image's aspect ratio is different than the screen, the image is centered with gray bars on either vertical or horizontal edges.

  - Image source is a URL.

  - Motion GIFs are allowed.

Optional

- Title

  - Plain text.

  - Fixed font and size.

  - At most one line; extra characters are truncated.

  - The card height collapses if no title is specified.

- Sub-title

  - Plain text.

  - Fixed font and font size.

  - At most one line; extra characters are truncated.

  - The card height collapses if no subtitle is specified.

- Link button

  - Link title is required.

  - At most one link.

  - Links to sites outside the developer's domain are allowed.

  - Link text cannot be misleading. This is checked in the approval process.

  - A basic card has no interaction capabilities without a link. Tapping on the link sends the user to the link, while the main body of the card remains inactive.

- Border

  - The border between the card and the image container can be adjusted to customize the presentation of your basic card.

  - Configured by setting the JSON string property imageDisplayOptions.
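The requirements above can be sketched as a small Node.js helper that assembles the basicCard JSON object used in the webhook samples in this guide. The names joinCardLines and buildBasicCard are hypothetical and not part of the client library; the field names (formattedText, openUrlAction, imageDisplayOptions, and so on) follow the JSON samples in this section.

```javascript
// Hypothetical helper: joins lines with the double space + '\n' sequence
// that basic cards render as a line break.
function joinCardLines(lines) {
  return lines.join('  \n');
}

// Hypothetical helper: builds a basicCard item and enforces the rules that
// can be checked locally (text or image required, link title required,
// at most one link).
function buildBasicCard({title, subtitle, formattedText, image, button,
  imageDisplayOptions}) {
  if (!formattedText && !image) {
    throw new Error('A basic card needs formatted text or an image.');
  }
  if (image && !image.url) {
    throw new Error('The image source must be a URL.');
  }
  if (button && (!button.title || !button.url)) {
    throw new Error('A link button needs both a title and a URL.');
  }

  const card = {};
  if (title) card.title = title;
  if (subtitle) card.subtitle = subtitle;
  if (formattedText) card.formattedText = formattedText;
  if (image) card.image = image;
  if (button) {
    // A single-element array, since a card allows at most one link.
    card.buttons = [{title: button.title, openUrlAction: {url: button.url}}];
  }
  if (imageDisplayOptions) card.imageDisplayOptions = imageDisplayOptions;
  return {basicCard: card};
}

const item = buildBasicCard({
  title: 'Title: this is a title',
  formattedText: joinCardLines(['First line', 'Second line']),
  button: {title: 'This is a button', url: 'https://assistant.google.com/'},
});
console.log(JSON.stringify(item, null, 2));
```

The line and character limits depend on the device, so this sketch leaves truncation to the Assistant rather than trying to enforce it.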

Sample code

Node.js

    // Assumes `app` was created with dialogflow() from the same library.
    const {BasicCard, Button, Image} = require('actions-on-google');

    app.intent('Basic Card', (conv) => {
      // Basic cards need a screen, so fall back to speech on other surfaces.
      if (!conv.screen) {
        conv.ask('Sorry, try this on a screen device or select the ' +
          'phone surface in the simulator.');
        conv.ask('Which response would you like to see next?');
        return;
      }

      conv.ask(`Here's an example of a basic card.`);
      conv.ask(new BasicCard({
        text: `This is a basic card. Text in a basic card can include "quotes" and
        most other unicode characters including emojis. Basic cards also support
        some markdown formatting like *emphasis* or _italics_, **strong** or
        __bold__, and ***bold italic*** or ___strong emphasis___ as well as other
        things like line  \nbreaks`, // Note the two spaces before '\n' required for
                                     // a line break to be rendered in the card.
        subtitle: 'This is a subtitle',
        title: 'Title: this is a title',
        buttons: new Button({
          title: 'This is a button',
          url: 'https://assistant.google.com/',
        }),
        image: new Image({
          url: 'https://storage.googleapis.com/actionsresources/logo_assistant_2x_64dp.png',
          alt: 'Image alternate text',
        }),
        display: 'CROPPED',
      }));
      conv.ask('Which response would you like to see next?');
    });

Java

    @ForIntent("Basic Card")
    public ActionResponse basicCard(ActionRequest request) {
      ResponseBuilder responseBuilder = getResponseBuilder(request);
      // Basic cards need a screen, so fall back to speech on other surfaces.
      if (!request.hasCapability(Capability.SCREEN_OUTPUT.getValue())) {
        return responseBuilder
            .add("Sorry, try this on a screen device or select the phone surface in the simulator.")
            .add("Which response would you like to see next?")
            .build();
      }

      // Prepare formatted text for card
      String text =
          "This is a basic card. Text in a basic card can include \"quotes\" and\n"
              + " most other unicode characters including emoji \uD83D\uDCF1. Basic cards also support\n"
              + " some markdown formatting like *emphasis* or _italics_, **strong** or\n"
              + " __bold__, and ***bold italic*** or ___strong emphasis___ as well as other\n"
              + " things like line  \\nbreaks"; // Note the two spaces before '\n' required for
                                                // a line break to be rendered in the card.
      responseBuilder
          .add("Here's an example of a basic card.")
          .add(
              new BasicCard()
                  .setTitle("Title: this is a title")
                  .setSubtitle("This is a subtitle")
                  .setFormattedText(text)
                  .setImage(
                      new Image()
                          .setUrl(
                              "https://storage.googleapis.com/actionsresources/logo_assistant_2x_64dp.png")
                          .setAccessibilityText("Image alternate text"))
                  .setImageDisplayOptions("CROPPED")
                  .setButtons(
                      new ArrayList<Button>(
                          Arrays.asList(
                              new Button()
                                  .setTitle("This is a Button")
                                  .setOpenUrlAction(
                                      new OpenUrlAction().setUrl("https://assistant.google.com"))))))
          .add("Which response would you like to see next?");

      return responseBuilder.build();
    }


JSON

Note that the JSON below describes a Dialogflow webhook response.

    {
      "payload": {
        "google": {
          "expectUserResponse": true,
          "richResponse": {
            "items": [
              { "simpleResponse": { "textToSpeech": "Here's an example of a basic card." } },
              {
                "basicCard": {
                  "title": "Title: this is a title",
                  "subtitle": "This is a subtitle",
                  "formattedText": "This is a basic card. Text in a basic card can include \"quotes\" and\n most other unicode characters including emojis. Basic cards also support\n some markdown formatting like *emphasis* or _italics_, **strong** or\n __bold__, and ***bold italic*** or ___strong emphasis___ as well as other\n things like line  \nbreaks",
                  "image": {
                    "url": "https://storage.googleapis.com/actionsresources/logo_assistant_2x_64dp.png",
                    "accessibilityText": "Image alternate text"
                  },
                  "buttons": [
                    {
                      "title": "This is a button",
                      "openUrlAction": { "url": "https://assistant.google.com/" }
                    }
                  ],
                  "imageDisplayOptions": "CROPPED"
                }
              },
              { "simpleResponse": { "textToSpeech": "Which response would you like to see next?" } }
            ]
          }
        }
      }
    }

JSON

Note that the JSON below describes an Actions SDK webhook response.

    {
      "expectUserResponse": true,
      "expectedInputs": [
        {
          "possibleIntents": [ { "intent": "actions.intent.TEXT" } ],
          "inputPrompt": {
            "richInitialPrompt": {
              "items": [
                { "simpleResponse": { "textToSpeech": "Here's an example of a basic card." } },
                {
                  "basicCard": {
                    "title": "Title: this is a title",
                    "subtitle": "This is a subtitle",
                    "formattedText": "This is a basic card. Text in a basic card can include \"quotes\" and\n most other unicode characters including emojis. Basic cards also support\n some markdown formatting like *emphasis* or _italics_, **strong** or\n __bold__, and ***bold italic*** or ___strong emphasis___ as well as other\n things like line  \nbreaks",
                    "image": {
                      "url": "https://storage.googleapis.com/actionsresources/logo_assistant_2x_64dp.png",
                      "accessibilityText": "Image alternate text"
                    },
                    "buttons": [
                      {
                        "title": "This is a button",
                        "openUrlAction": { "url": "https://assistant.google.com/" }
                      }
                    ],
                    "imageDisplayOptions": "CROPPED"
                  }
                },
                { "simpleResponse": { "textToSpeech": "Which response would you like to see next?" } }
              ]
            }
          }
        }
      ]
    }

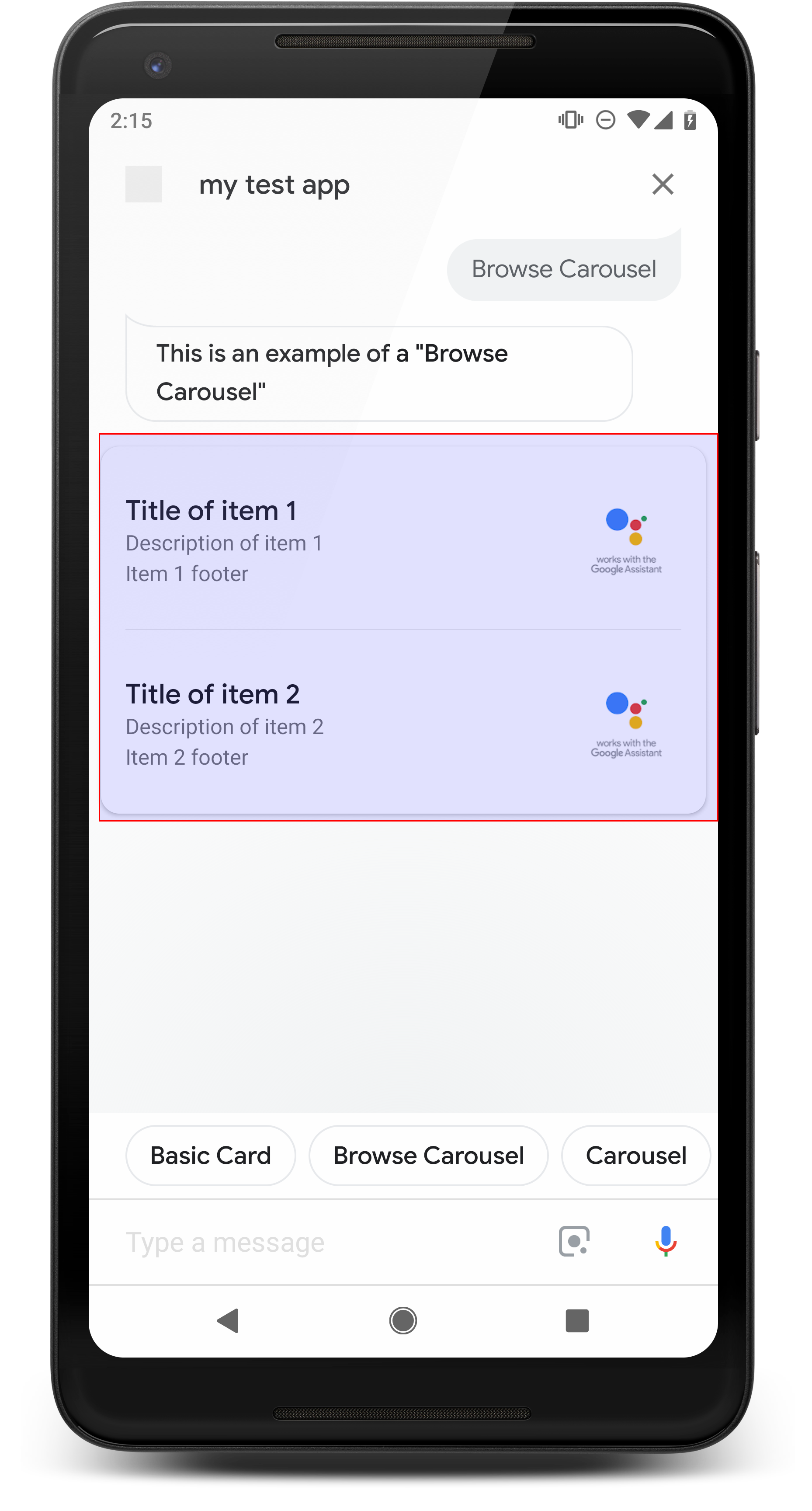

Browsing carousel

A browsing carousel is a rich response that allows users to scroll vertically and select a tile in a collection. Browsing carousels are designed specifically for web content by opening the selected tile in a web browser (or an AMP browser if all tiles are AMP-enabled). The browsing carousel also persists on the user's Assistant surface for browsing later.

Properties

The browsing carousel response type has the following requirements and optional properties that you can configure:

- Supported on surfaces that have both the actions.capability.SCREEN_OUTPUT and actions.capability.WEB_BROWSER capabilities. This response type is currently not available on smart displays.

- Browsing carousel

  - Maximum of ten tiles.

  - Minimum of two tiles.

  - Tiles in the carousel must all link to web content (AMP content recommended).

    - In order for the user to be taken to an AMP viewer, the urlHintType on AMP content tiles must be set to "AMP_CONTENT".

- Browsing carousel tiles

  - Tile consistency (required):

    - All tiles in a browsing carousel must have the same components. For example, if one tile has an image field, the rest of the tiles in the carousel must also have image fields.

    - If all tiles in the browsing carousel link to AMP-enabled content, the user is taken to an AMP browser with additional functionality. If any tile links to non-AMP content, then all tiles direct users to a web browser.

  - Image (optional)

    - Image is forced to be 128 dp tall x 232 dp wide.

    - If the image aspect ratio doesn't match the image bounding box, then the image is centered with bars on either side. On smartphones, the image is centered in a square with rounded corners.

    - If an image link is broken then a placeholder image is used instead.

    - Alt-text is required on an image.

  - Title (required)

    - Same formatting options as the basic text card.

    - Titles must be unique (to support voice selection).

    - Maximum of two lines of text.

    - Font size 16 sp.

  - Description (optional)

    - Same formatting options as the basic text card.

    - Maximum of four lines of text.

    - Truncated with ellipses (...)

    - Font size 14 sp, gray color.

  - Footer (optional)

    - Fixed font and font size.

    - Maximum of one line of text.

    - Truncated with ellipses (...)

    - Anchored at the bottom, so tiles with fewer lines of body text may have white space above the sub-text.

    - Font size 14 sp, gray color.

- Interaction

  - The user can scroll vertically to view items.

  - Tap card: Tapping an item takes the user to a browser, displaying the linked page.

- Voice input

  - Mic behavior

    - The mic doesn't re-open when a browsing carousel is sent to the user.

    - The user can still tap the mic or invoke the Assistant ("OK Google") to re-open the mic.
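The tile requirements above lend themselves to a quick pre-flight check. The Node.js sketch below validates an array of tiles shaped like the carouselBrowse items in the JSON samples in this guide. The function name validateBrowseCarousel and its messages are hypothetical; rendering limits such as line counts and font sizes are not checked.

```javascript
// Hypothetical helper (not part of any SDK): returns a list of rule
// violations for the tiles of a browsing carousel.
function validateBrowseCarousel(tiles) {
  const errors = [];

  // Minimum of two tiles, maximum of ten.
  if (tiles.length < 2 || tiles.length > 10) {
    errors.push('A browsing carousel needs between 2 and 10 tiles.');
  }

  // Titles are required and must be unique to support voice selection.
  const titles = tiles.map((tile) => tile.title);
  if (titles.some((title) => !title)) {
    errors.push('Every tile needs a title.');
  }
  if (new Set(titles).size !== titles.length) {
    errors.push('Tile titles must be unique.');
  }

  // Every tile must link to web content.
  if (tiles.some((tile) => !tile.openUrlAction || !tile.openUrlAction.url)) {
    errors.push('Every tile must link to web content.');
  }

  // Tile consistency: an optional field is on all tiles or on none.
  for (const field of ['image', 'description', 'footer']) {
    const count = tiles.filter((tile) => field in tile).length;
    if (count !== 0 && count !== tiles.length) {
      errors.push(`The "${field}" field must be on all tiles or on none.`);
    }
  }

  // Alt-text is required on an image.
  if (tiles.some((tile) => tile.image && !tile.image.accessibilityText)) {
    errors.push('Every image needs alt text (accessibilityText).');
  }
  return errors;
}

const tiles = [
  {title: 'Title of item 1', openUrlAction: {url: 'https://example.com'}},
  {title: 'Title of item 2', openUrlAction: {url: 'https://example.com'}},
];
console.log(validateBrowseCarousel(tiles)); // prints []
```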

Guidance

By default, the mic remains closed after a browse carousel is sent. If you want to continue the conversation afterwards, we strongly recommend adding suggestion chips below the carousel.

Never repeat the options presented in the list as suggestion chips. Chips in this context are used to pivot the conversation (not for choice selection).

Same as with lists, the chat bubble that accompanies the carousel card is a subset of the audio (TTS/SSML). The audio (TTS/SSML) here integrates the first tile in the carousel, and we also strongly discourage reading all the elements from the carousel. It's best to mention the first item and the reason why it's there (for example, the most popular, the most recently purchased, the most talked about).

Sample code

Node.js

    // Assumes `app` was created with dialogflow() from the same library.
    const {BrowseCarousel, BrowseCarouselItem, Image} = require('actions-on-google');

    app.intent('Browsing Carousel', (conv) => {
      // Browsing carousels need both a screen and a web browser.
      if (!conv.screen ||
        !conv.surface.capabilities.has('actions.capability.WEB_BROWSER')) {
        conv.ask('Sorry, try this on a phone or select the ' +
          'phone surface in the simulator.');
        conv.ask('Which response would you like to see next?');
        return;
      }

      conv.ask(`Here's an example of a browsing carousel.`);
      conv.ask(new BrowseCarousel({
        items: [
          new BrowseCarouselItem({
            title: 'Title of item 1',
            url: 'https://example.com',
            description: 'Description of item 1',
            image: new Image({
              url: 'https://storage.googleapis.com/actionsresources/logo_assistant_2x_64dp.png',
              alt: 'Image alternate text',
            }),
            footer: 'Item 1 footer',
          }),
          new BrowseCarouselItem({
            title: 'Title of item 2',
            url: 'https://example.com',
            description: 'Description of item 2',
            image: new Image({
              url: 'https://storage.googleapis.com/actionsresources/logo_assistant_2x_64dp.png',
              alt: 'Image alternate text',
            }),
            footer: 'Item 2 footer',
          }),
        ],
      }));
    });

Java

    @ForIntent("Browsing Carousel")
    public ActionResponse browseCarousel(ActionRequest request) {
      ResponseBuilder responseBuilder = getResponseBuilder(request);
      if (!request.hasCapability(Capability.SCREEN_OUTPUT.getValue())
          || !request.hasCapability(Capability.WEB_BROWSER.getValue())) {
        return responseBuilder
            .add("Sorry, try this on a phone or select the phone surface in the simulator.")
            .add("Which response would you like to see next?")
            .build();
      }

      responseBuilder
          .add("Here's an example of a browsing carousel.")
          .add(
              new CarouselBrowse()
                  .setItems(
                      new ArrayList<CarouselBrowseItem>(
                          Arrays.asList(
                              new CarouselBrowseItem()
                                  .setTitle("Title of item 1")
                                  .setDescription("Description of item 1")
                                  .setOpenUrlAction(new OpenUrlAction().setUrl("https://example.com"))
                                  .setImage(
                                      new Image()
                                          .setUrl(
                                              "https://storage.googleapis.com/actionsresources/logo_assistant_2x_64dp.png")
                                          .setAccessibilityText("Image alternate text"))
                                  .setFooter("Item 1 footer"),
                              new CarouselBrowseItem()
                                  .setTitle("Title of item 2")
                                  .setDescription("Description of item 2")
                                  .setOpenUrlAction(new OpenUrlAction().setUrl("https://example.com"))
                                  .setImage(
                                      new Image()
                                          .setUrl(
                                              "https://storage.googleapis.com/actionsresources/logo_assistant_2x_64dp.png")
                                          .setAccessibilityText("Image alternate text"))
                                  .setFooter("Item 2 footer")))));

      return responseBuilder.build();
    }


JSON

Note that the JSON below describes a Dialogflow webhook response.

    {
      "payload": {
        "google": {
          "expectUserResponse": true,
          "richResponse": {
            "items": [
              { "simpleResponse": { "textToSpeech": "Here's an example of a browsing carousel." } },
              {
                "carouselBrowse": {
                  "items": [
                    {
                      "title": "Title of item 1",
                      "openUrlAction": { "url": "https://example.com" },
                      "description": "Description of item 1",
                      "footer": "Item 1 footer",
                      "image": {
                        "url": "https://storage.googleapis.com/actionsresources/logo_assistant_2x_64dp.png",
                        "accessibilityText": "Image alternate text"
                      }
                    },
                    {
                      "title": "Title of item 2",
                      "openUrlAction": { "url": "https://example.com" },
                      "description": "Description of item 2",
                      "footer": "Item 2 footer",
                      "image": {
                        "url": "https://storage.googleapis.com/actionsresources/logo_assistant_2x_64dp.png",
                        "accessibilityText": "Image alternate text"
                      }
                    }
                  ]
                }
              }
            ]
          }
        }
      }
    }

JSON

Note that the JSON below describes an Actions SDK webhook response.

    {
      "expectUserResponse": true,
      "expectedInputs": [
        {
          "inputPrompt": {
            "richInitialPrompt": {
              "items": [
                { "simpleResponse": { "textToSpeech": "Here's an example of a browsing carousel." } },
                {
                  "carouselBrowse": {
                    "items": [
                      {
                        "description": "Description of item 1",
                        "footer": "Item 1 footer",
                        "image": {
                          "accessibilityText": "Image alternate text",
                          "url": "https://storage.googleapis.com/actionsresources/logo_assistant_2x_64dp.png"
                        },
                        "openUrlAction": { "url": "https://example.com" },
                        "title": "Title of item 1"
                      },
                      {
                        "description": "Description of item 2",
                        "footer": "Item 2 footer",
                        "image": {
                          "accessibilityText": "Image alternate text",
                          "url": "https://storage.googleapis.com/actionsresources/logo_assistant_2x_64dp.png"
                        },
                        "openUrlAction": { "url": "https://example.com" },
                        "title": "Title of item 2"
                      }
                    ]
                  }
                }
              ]
            }
          },
          "possibleIntents": [ { "intent": "actions.intent.TEXT" } ]
        }
      ]
    }

Handling selected item

No follow-up fulfillment is necessary for user interactions with browse carousel items, since the carousel handles the browser handoff. Keep in mind that the mic won't re-open after the user interacts with a browse carousel item, so you should either end the conversation or include suggestion chips in your response as per the guidance above.

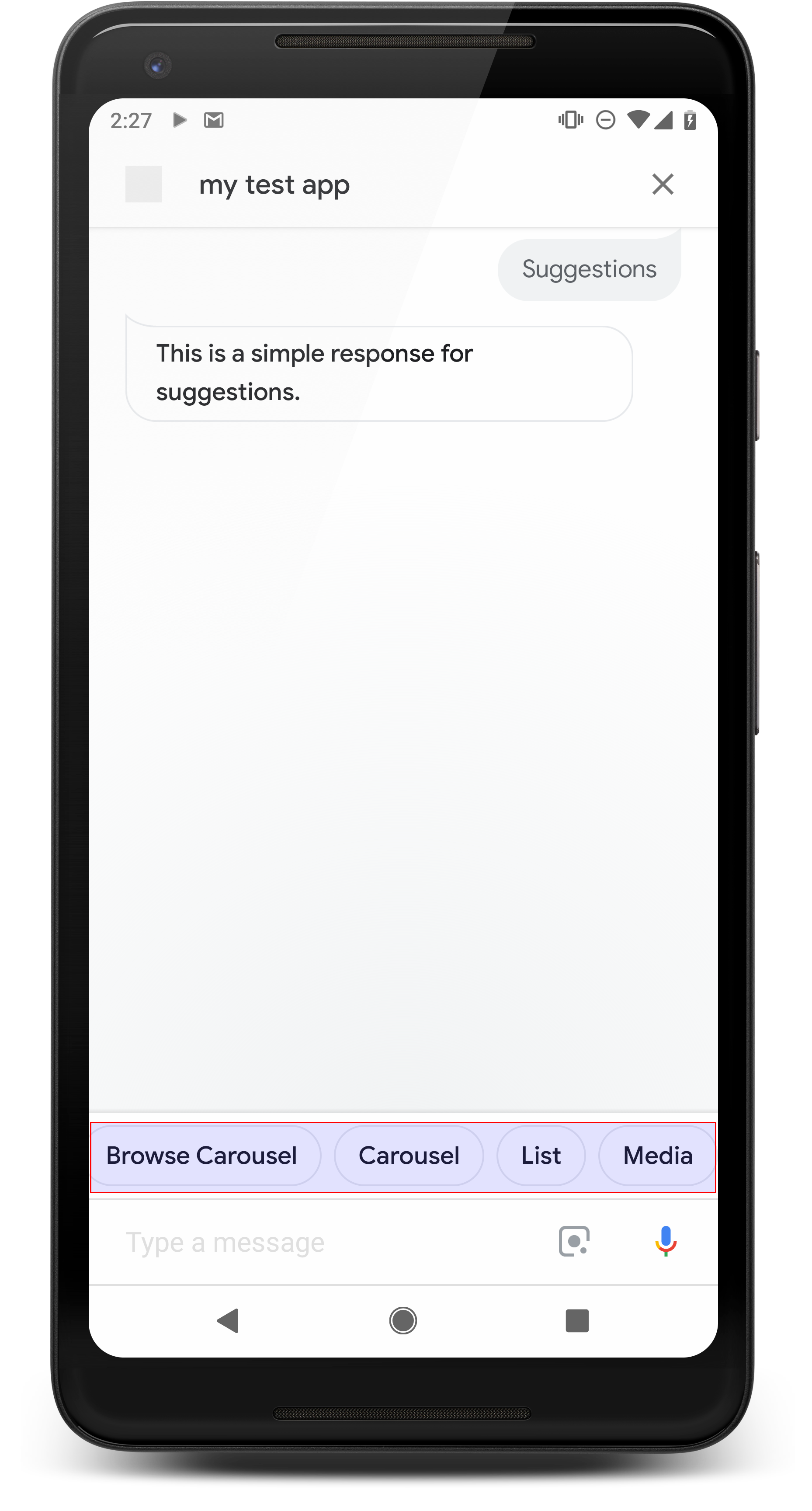

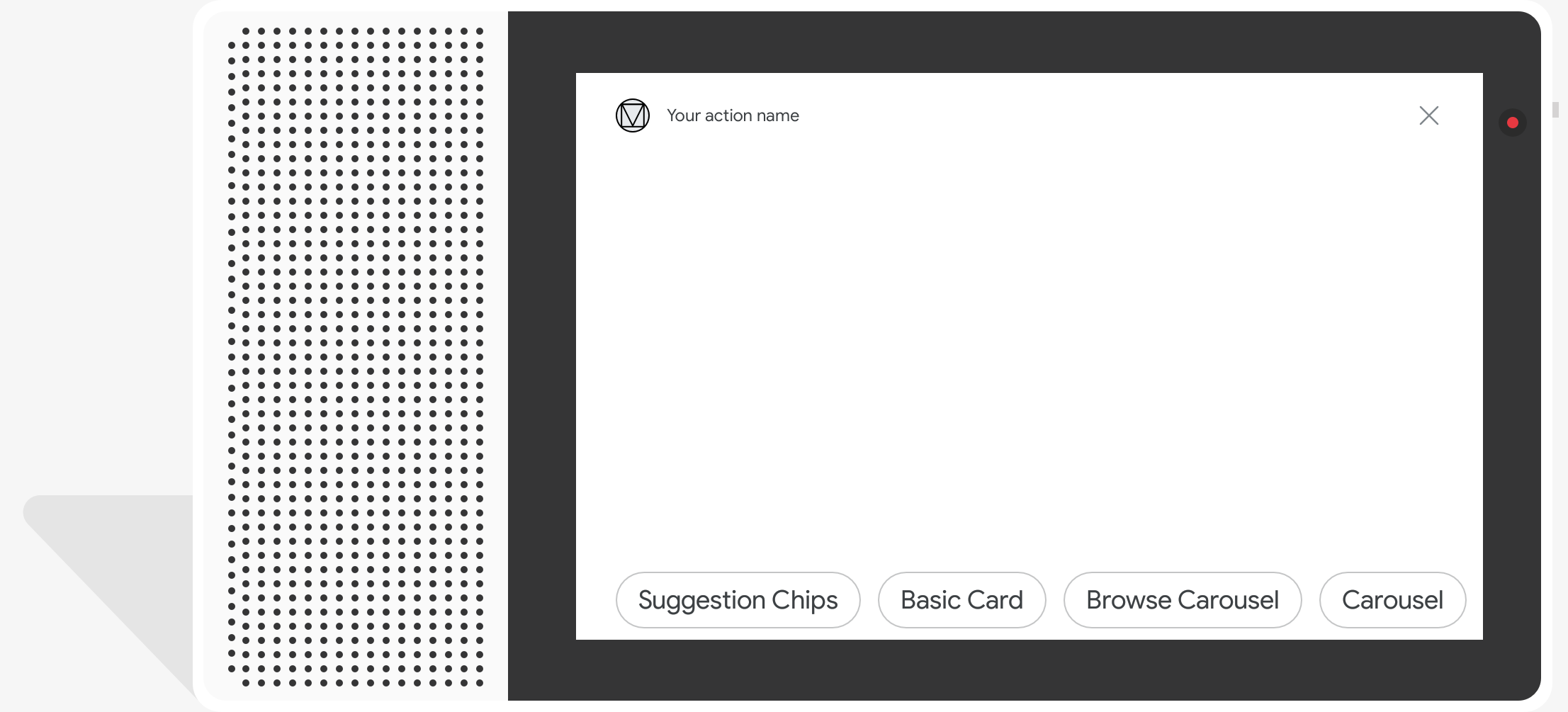

Suggestion chips

Use suggestion chips to hint at responses to continue or pivot the conversation. If during the conversation there is a primary call for action, consider listing that as the first suggestion chip.

Whenever possible, you should incorporate one key suggestion as part of the chat bubble, but do so only if the response or chat conversation feels natural.

Properties

Suggestion chips have the following requirements and optional properties that you can configure:

- Supported on surfaces with the actions.capability.SCREEN_OUTPUT capability.

- To link suggestion chips out to the web, surfaces must also have the actions.capability.WEB_BROWSER capability. This capability is currently not available on smart displays.

- Maximum of eight chips.

- Maximum text length of 25 characters.

- Supports only plain text.
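As a minimal sketch of the chip limits above, the following Node.js helper turns plain strings into the suggestions array used in the webhook JSON samples in this guide and rejects input that breaks the limits. The name buildSuggestions is hypothetical and not part of the client library.

```javascript
// Hypothetical helper: converts chip titles into the "suggestions" array of a
// rich response, enforcing the limits of eight chips and 25 characters each.
function buildSuggestions(titles) {
  if (titles.length > 8) {
    throw new Error(`Got ${titles.length} chips; the maximum is 8.`);
  }
  for (const title of titles) {
    if (typeof title !== 'string' || title.length === 0) {
      throw new Error('Chip titles must be non-empty plain text.');
    }
    if (title.length > 25) {
      throw new Error(`Chip "${title}" is longer than 25 characters.`);
    }
  }
  return titles.map((title) => ({title}));
}

console.log(buildSuggestions(['Suggestion 1', 'Suggestion 2']));
```

Throwing here, rather than silently truncating, keeps a chip from being shortened into something that no longer matches the reply you expect the user to send.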

Sample code

Node.js

    // Assumes `app` was created with dialogflow() from the same library.
    const {Suggestions, LinkOutSuggestion} = require('actions-on-google');

    app.intent('Suggestion Chips', (conv) => {
      if (!conv.screen) {
        conv.ask('Chips can be demonstrated on screen devices.');
        conv.ask('Which response would you like to see next?');
        return;
      }

      conv.ask('These are suggestion chips.');
      // Chips can be added one at a time or as an array.
      conv.ask(new Suggestions('Suggestion 1'));
      conv.ask(new Suggestions(['Suggestion 2', 'Suggestion 3']));
      conv.ask(new LinkOutSuggestion({
        name: 'Suggestion Link',
        url: 'https://assistant.google.com/',
      }));
      conv.ask('Which type of response would you like to see next?');
    });

Java

    @ForIntent("Suggestion Chips")
    public ActionResponse suggestionChips(ActionRequest request) {
      ResponseBuilder responseBuilder = getResponseBuilder(request);
      if (!request.hasCapability(Capability.SCREEN_OUTPUT.getValue())) {
        return responseBuilder
            .add("Sorry, try this on a screen device or select the phone surface in the simulator.")
            .add("Which response would you like to see next?")
            .build();
      }

      responseBuilder
          .add("These are suggestion chips.")
          .addSuggestions(new String[] {"Suggestion 1", "Suggestion 2", "Suggestion 3"})
          .add(
              new LinkOutSuggestion()
                  .setDestinationName("Suggestion Link")
                  .setUrl("https://assistant.google.com/"))
          .add("Which type of response would you like to see next?");

      return responseBuilder.build();
    }


JSON

Note that the JSON below describes a Dialogflow webhook response.

    {
      "payload": {
        "google": {
          "expectUserResponse": true,
          "richResponse": {
            "items": [
              { "simpleResponse": { "textToSpeech": "These are suggestion chips." } },
              { "simpleResponse": { "textToSpeech": "Which type of response would you like to see next?" } }
            ],
            "suggestions": [
              { "title": "Suggestion 1" },
              { "title": "Suggestion 2" },
              { "title": "Suggestion 3" }
            ],
            "linkOutSuggestion": {
              "destinationName": "Suggestion Link",
              "url": "https://assistant.google.com/"
            }
          }
        }
      }
    }

JSON

Note that the JSON below describes an Actions SDK webhook response.

    {
      "expectUserResponse": true,
      "expectedInputs": [
        {
          "possibleIntents": [ { "intent": "actions.intent.TEXT" } ],
          "inputPrompt": {
            "richInitialPrompt": {
              "items": [
                { "simpleResponse": { "textToSpeech": "These are suggestion chips." } },
                { "simpleResponse": { "textToSpeech": "Which type of response would you like to see next?" } }
              ],
              "suggestions": [
                { "title": "Suggestion 1" },
                { "title": "Suggestion 2" },
                { "title": "Suggestion 3" }
              ],
              "linkOutSuggestion": {
                "destinationName": "Suggestion Link",
                "url": "https://assistant.google.com/"
              }
            }
          }
        }
      ]
    }

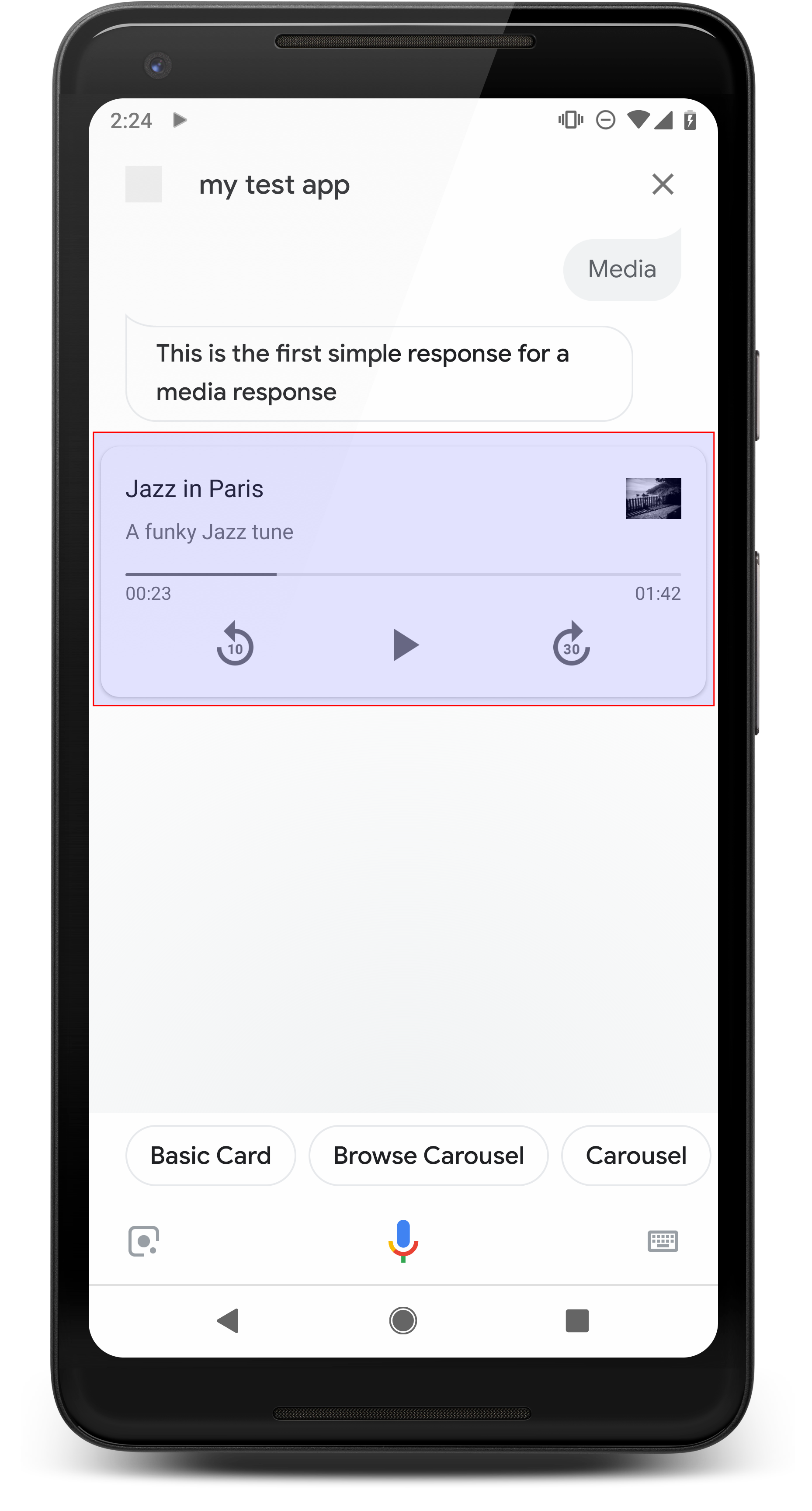

Media responses

Media responses let your Actions play audio content with a playback duration longer than the 240-second limit of SSML. The primary component of a media response is the single-track card. The card allows the user to perform these operations:

- Replay the last 10 seconds.

- Skip forward for 30 seconds.

- View the total length of the media content.

- View a progress indicator for audio playback.

- View the elapsed playback time.

Media responses support the following audio controls for voice interaction:

- “Ok Google, play.”

- “Ok Google, pause.”

- “Ok Google, stop.”

- “Ok Google, start over.”

Users can also control the volume by saying phrases like, "Hey Google, turn the volume up." or "Hey Google, set the volume to 50 percent." Intents in your Action take precedence if they handle similar training phrases. Let Assistant handle these user requests unless your Action has a specific reason to.

Properties

Media responses have the following requirements and optional properties that you can configure:

- Supported on surfaces with the actions.capability.MEDIA_RESPONSE_AUDIO capability.

- Audio for playback must be in a correctly formatted .mp3 file. Live streaming is not supported.

- The media file for playback must be specified as an HTTPS URL.

- Image (optional)

  - You can optionally include an icon or an image.

  - Icon

    - Your icon appears as a borderless thumbnail on the right of the media player card.

    - Size should be 36 x 36 dp. Larger sized images are resized to fit.

  - Image

    - The image container will be 192 dp tall.

    - Your image appears at the top of the media player card, and spans the full width of the card. Most images will appear with bars along the top or sides.

    - Motion GIFs are allowed.

  - You must specify the image source as a URL.

  - Alt-text is required on all images.
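The requirements above can be sketched as a small Node.js helper that builds the mediaResponse JSON object used in the webhook samples in this guide. The name buildMediaResponse is hypothetical and not part of the client library. Checking for a .mp3 file extension is an assumption made for illustration; it is only a heuristic and does not prove the file is correctly formatted.

```javascript
// Hypothetical helper: builds a mediaResponse item for a single audio track
// and enforces the rules that can be checked locally.
function buildMediaResponse({name, contentUrl, description, icon}) {
  const parsed = new URL(contentUrl); // Throws if the URL is malformed.

  // The media file must be specified as an HTTPS URL.
  if (parsed.protocol !== 'https:') {
    throw new Error('The media file must be specified as an HTTPS URL.');
  }
  // Heuristic only (assumption): expect the path to end in .mp3.
  if (!parsed.pathname.toLowerCase().endsWith('.mp3')) {
    throw new Error('The media file should be an .mp3 file.');
  }
  // Alt-text is required on all images.
  if (icon && (!icon.url || !icon.accessibilityText)) {
    throw new Error('An icon needs a URL and alt text (accessibilityText).');
  }

  const mediaObject = {name, contentUrl};
  if (description) mediaObject.description = description;
  if (icon) mediaObject.icon = icon;
  return {mediaResponse: {mediaType: 'AUDIO', mediaObjects: [mediaObject]}};
}

const item = buildMediaResponse({
  name: 'Jazz in Paris',
  contentUrl: 'https://storage.googleapis.com/automotive-media/Jazz_In_Paris.mp3',
  description: 'A funky Jazz tune',
});
console.log(JSON.stringify(item, null, 2));
```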

Behavior on surfaces

Media responses are supported on Android phones and on Google Home. The behavior of media responses depends on the surface on which users interact with your Actions.

On Android phones, users can see media responses when any of these conditions are met:

- Google Assistant is in the foreground, and the phone screen is on.

- The user leaves Google Assistant while audio is playing and returns to Google Assistant within 10 minutes of playback completion. On returning to Google Assistant, the user sees the media card and suggestion chips.

- The Assistant lets users control device volume within your conversational Action by saying things like "turn the volume up" or "set the volume to 50 percent". If you have intents that handle similar training phrases, your intents take precedence. We recommend letting the Assistant handle these user requests unless your Action has a specific reason to.

Media controls are available while the phone is locked. On Android, the controls also appear in the notification area.

Sample code

The following code sample shows how you might update your rich responses to include media.

Node.js

    // Assumes `app` was created with dialogflow() from the same library.
    const {MediaObject, Image, Suggestions} = require('actions-on-google');

    app.intent('Media Response', (conv) => {
      if (!conv.surface.capabilities
        .has('actions.capability.MEDIA_RESPONSE_AUDIO')) {
        conv.ask('Sorry, this device does not support audio playback.');
        conv.ask('Which response would you like to see next?');
        return;
      }

      conv.ask('This is a media response example.');
      conv.ask(new MediaObject({
        name: 'Jazz in Paris',
        url: 'https://storage.googleapis.com/automotive-media/Jazz_In_Paris.mp3',
        description: 'A funky Jazz tune',
        icon: new Image({
          url: 'https://storage.googleapis.com/automotive-media/album_art.jpg',
          alt: 'Album cover of an ocean view',
        }),
      }));
      conv.ask(new Suggestions(['Basic Card', 'List',
        'Carousel', 'Browsing Carousel']));
    });

Java

    @ForIntent("Media Response")
    public ActionResponse mediaResponse(ActionRequest request) {
      ResponseBuilder responseBuilder = getResponseBuilder(request);
      if (!request.hasCapability(Capability.MEDIA_RESPONSE_AUDIO.getValue())) {
        return responseBuilder
            .add("Sorry, this device does not support audio playback.")
            .add("Which response would you like to see next?")
            .build();
      }

      responseBuilder
          .add("This is a media response example.")
          .add(
              new MediaResponse()
                  .setMediaObjects(
                      new ArrayList<MediaObject>(
                          Arrays.asList(
                              new MediaObject()
                                  .setName("Jazz in Paris")
                                  .setDescription("A funky Jazz tune")
                                  .setContentUrl(
                                      "https://storage.googleapis.com/automotive-media/Jazz_In_Paris.mp3")
                                  .setIcon(
                                      new Image()
                                          .setUrl(
                                              "https://storage.googleapis.com/automotive-media/album_art.jpg")
                                          .setAccessibilityText("Album cover of an ocean view")))))
                  .setMediaType("AUDIO"))
          .addSuggestions(new String[] {"Basic Card", "List", "Carousel", "Browsing Carousel"});

      return responseBuilder.build();
    }


JSON

Note that the JSON below describes a webhook response.

{ "payload": { "google": { "expectUserResponse": true, "richResponse": { "items": [ { "simpleResponse": { "textToSpeech": "This is a media response example." } }, { "mediaResponse": { "mediaType": "AUDIO", "mediaObjects": [ { "contentUrl": "https://storage.googleapis.com/automotive-media/Jazz_In_Paris.mp3", "description": "A funky Jazz tune", "icon": { "url": "https://storage.googleapis.com/automotive-media/album_art.jpg", "accessibilityText": "Album cover of an ocean view" }, "name": "Jazz in Paris" } ] } } ], "suggestions": [ { "title": "Basic Card" }, { "title": "List" }, { "title": "Carousel" }, { "title": "Browsing Carousel" } ] } } } }

JSON

Note that the JSON below describes a webhook response.

{ "expectUserResponse": true, "expectedInputs": [ { "possibleIntents": [ { "intent": "actions.intent.TEXT" } ], "inputPrompt": { "richInitialPrompt": { "items": [ { "simpleResponse": { "textToSpeech": "This is a media response example." } }, { "mediaResponse": { "mediaType": "AUDIO", "mediaObjects": [ { "contentUrl": "https://storage.googleapis.com/automotive-media/Jazz_In_Paris.mp3", "description": "A funky Jazz tune", "icon": { "url": "https://storage.googleapis.com/automotive-media/album_art.jpg", "accessibilityText": "Album cover of an ocean view" }, "name": "Jazz in Paris" } ] } } ], "suggestions": [ { "title": "Basic Card" }, { "title": "List" }, { "title": "Carousel" }, { "title": "Browsing Carousel" } ] } } } ] }

Guidance

Your response must include, in the rich response's items array, a mediaResponse with a mediaType of AUDIO that contains a mediaObject. A media response supports a single media object. A media object must include the content URL of the audio file. A media object may optionally include a name, sub-text (description), and an icon or image URL.

On phones and Google Home, when your Action completes audio playback, Google Assistant checks if the media response is a FinalResponse. If not, it sends a callback to your fulfillment, allowing you to respond to the user.

Your Action must include suggestion chips if the response is not a FinalResponse.
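The rules above can be sketched as a small check run against a response payload before it is sent. This is a minimal illustration in plain JavaScript, not part of the client library: `checkMediaResponse` is a hypothetical helper, it takes the object found under `payload.google` in the Dialogflow webhook response shown earlier, and it assumes that `expectUserResponse: true` is what marks a response as not final.

```javascript
// Hypothetical helper (not a library API): returns a list of problems found
// in the `payload.google` object of a Dialogflow webhook response.
function checkMediaResponse(google) {
  const problems = [];
  const rich = google.richResponse || {};
  const items = rich.items || [];

  // Collect every mediaResponse item in the rich response's items array.
  const media = items.map((item) => item.mediaResponse).filter(Boolean);
  if (media.length === 0) {
    problems.push('response must include a mediaResponse item');
    return problems;
  }

  const mediaResponse = media[0];
  if (mediaResponse.mediaType !== 'AUDIO') {
    problems.push('mediaType must be AUDIO');
  }

  // A media response supports a single media object with a content URL.
  const objects = mediaResponse.mediaObjects || [];
  if (objects.length !== 1) {
    problems.push('a media response supports a single media object');
  } else if (!objects[0].contentUrl) {
    problems.push('the media object must include a contentUrl');
  }

  // Assumption: expectUserResponse === true means the response is not final,
  // so suggestion chips are required.
  const chips = rich.suggestions || [];
  if (google.expectUserResponse && chips.length === 0) {
    problems.push('suggestion chips are required when the response is not final');
  }
  return problems;
}
```

An empty array means the payload satisfies the requirements listed in this section. The check does not verify that the audio URL is reachable.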

Handling callback after playback completion

Your Action should handle the actions.intent.MEDIA_STATUS intent to prompt the user for a follow-up (for example, to play another song). Your Action receives this callback once media playback is completed. In the callback, the MEDIA_STATUS argument contains status information about the current media. The status value will be either FINISHED or STATUS_UNSPECIFIED.
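To illustrate where the status lives in the callback, the sketch below reads it directly from an Actions SDK webhook request body, using the `inputs[].arguments[]` layout shown in the request JSON later in this section. `getMediaStatus` is a hypothetical helper, and returning STATUS_UNSPECIFIED when the argument is absent is an assumption made for this example.

```javascript
// Hypothetical helper: extracts the MEDIA_STATUS value from an Actions SDK
// webhook request body.
function getMediaStatus(request) {
  for (const input of request.inputs || []) {
    if (input.intent !== 'actions.intent.MEDIA_STATUS') continue;
    const arg = (input.arguments || []).find((a) => a.name === 'MEDIA_STATUS');
    if (arg && arg.extension && arg.extension.status) {
      return arg.extension.status;
    }
  }
  // Assumption: treat a missing argument as an unspecified status.
  return 'STATUS_UNSPECIFIED';
}
```

If you use the Node.js client library, `conv.arguments.get('MEDIA_STATUS')` in the fulfillment samples below gives you the same argument without parsing the request yourself.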

Using Dialogflow

If you want to perform conversational branching in Dialogflow, you'll need to set up an input context of actions_capability_media_response_audio on the intent to ensure it only triggers on surfaces that support a media response.
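The input context itself is configured on the intent in the Dialogflow console. As a complementary sketch, the same capability context also appears in the `outputContexts` of the Dialogflow webhook request (see the request JSON later in this section), so fulfillment code can check for it. `supportsMediaResponse` is a hypothetical helper written for this example.

```javascript
// Hypothetical helper: returns true if the Dialogflow webhook request carries
// the actions_capability_media_response_audio context.
function supportsMediaResponse(webhookRequest) {
  const suffix = '/contexts/actions_capability_media_response_audio';
  const contexts = (webhookRequest.queryResult || {}).outputContexts || [];
  // Context names are full resource paths, so compare only the trailing part.
  return contexts.some(
      (context) => typeof context.name === 'string' &&
          context.name.endsWith(suffix));
}
```

This check does not replace the input context. It only mirrors the condition inside fulfillment, which can be useful for logging or for a fallback message.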

Building your fulfillment

The code snippet below shows how you might write the fulfillment code for your Action. If you're using Dialogflow, replace actions.intent.MEDIA_STATUS with the action name specified in the intent that receives the actions_intent_MEDIA_STATUS event (for example, "media.status.update").

Node.js

app.intent('Media Status', (conv) => { const mediaStatus = conv.arguments.get('MEDIA_STATUS'); let response = 'Unknown media status received.'; if (mediaStatus && mediaStatus.status === 'FINISHED') { response = 'Hope you enjoyed the tune!'; } conv.ask(response); conv.ask('Which response would you like to see next?'); });

Java

@ForIntent("Media Status") public ActionResponse mediaStatus(ActionRequest request) { ResponseBuilder responseBuilder = getResponseBuilder(request); String mediaStatus = request.getMediaStatus(); String response = "Unknown media status received."; if (mediaStatus != null && mediaStatus.equals("FINISHED")) { response = "Hope you enjoyed the tune!"; } responseBuilder.add(response); responseBuilder.add("Which response would you like to see next?"); return responseBuilder.build(); }

Node.js

app.intent('actions.intent.MEDIA_STATUS', (conv) => { const mediaStatus = conv.arguments.get('MEDIA_STATUS'); let response = 'Unknown media status received.'; if (mediaStatus && mediaStatus.status === 'FINISHED') { response = 'Hope you enjoyed the tune!'; } conv.ask(response); conv.ask('Which response would you like to see next?'); });

Java

@ForIntent("actions.intent.MEDIA_STATUS") public ActionResponse mediaStatus(ActionRequest request) { ResponseBuilder responseBuilder = getResponseBuilder(request); String mediaStatus = request.getMediaStatus(); String response = "Unknown media status received."; if (mediaStatus != null && mediaStatus.equals("FINISHED")) { response = "Hope you enjoyed the tune!"; } responseBuilder.add(response); responseBuilder.add("Which response would you like to see next?"); return responseBuilder.build(); }

JSON

Note that the JSON below describes a webhook request.

{ "responseId": "151b68df-98de-41fb-94b5-caeace90a7e9-21947381", "queryResult": { "queryText": "actions_intent_MEDIA_STATUS", "parameters": {}, "allRequiredParamsPresent": true, "fulfillmentText": "Webhook failed for intent: Media Status", "fulfillmentMessages": [ { "text": { "text": [ "Webhook failed for intent: Media Status" ] } } ], "outputContexts": [ { "name": "projects/df-responses-kohler/agent/sessions/ABwppHHsebncupHK11oKhsCTgyH96GRNYH-xpeeMTqb-cvOxbd67QenbRlZM4bGAIB8_KXdTfI7-7lYVKN1ovAhCaA/contexts/actions_capability_media_response_audio" }, { "name": "projects/df-responses-kohler/agent/sessions/ABwppHHsebncupHK11oKhsCTgyH96GRNYH-xpeeMTqb-cvOxbd67QenbRlZM4bGAIB8_KXdTfI7-7lYVKN1ovAhCaA/contexts/actions_capability_account_linking" }, { "name": "projects/df-responses-kohler/agent/sessions/ABwppHHsebncupHK11oKhsCTgyH96GRNYH-xpeeMTqb-cvOxbd67QenbRlZM4bGAIB8_KXdTfI7-7lYVKN1ovAhCaA/contexts/actions_capability_web_browser" }, { "name": "projects/df-responses-kohler/agent/sessions/ABwppHHsebncupHK11oKhsCTgyH96GRNYH-xpeeMTqb-cvOxbd67QenbRlZM4bGAIB8_KXdTfI7-7lYVKN1ovAhCaA/contexts/actions_capability_screen_output" }, { "name": "projects/df-responses-kohler/agent/sessions/ABwppHHsebncupHK11oKhsCTgyH96GRNYH-xpeeMTqb-cvOxbd67QenbRlZM4bGAIB8_KXdTfI7-7lYVKN1ovAhCaA/contexts/actions_capability_audio_output" }, { "name": "projects/df-responses-kohler/agent/sessions/ABwppHHsebncupHK11oKhsCTgyH96GRNYH-xpeeMTqb-cvOxbd67QenbRlZM4bGAIB8_KXdTfI7-7lYVKN1ovAhCaA/contexts/google_assistant_input_type_voice" }, { "name": "projects/df-responses-kohler/agent/sessions/ABwppHHsebncupHK11oKhsCTgyH96GRNYH-xpeeMTqb-cvOxbd67QenbRlZM4bGAIB8_KXdTfI7-7lYVKN1ovAhCaA/contexts/actions_intent_media_status", "parameters": { "MEDIA_STATUS": { "@type": "type.googleapis.com/google.actions.v2.MediaStatus", "status": "FINISHED" } } } ], "intent": { "name": "projects/df-responses-kohler/agent/intents/068b27d3-c148-4044-bfab-dfa37eebd90d", "displayName": "Media Status" }, "intentDetectionConfidence": 1, 
"languageCode": "en" }, "originalDetectIntentRequest": { "source": "google", "version": "2", "payload": { "user": { "locale": "en-US", "lastSeen": "2019-08-04T23:57:15Z", "userVerificationStatus": "VERIFIED" }, "conversation": { "conversationId": "ABwppHHsebncupHK11oKhsCTgyH96GRNYH-xpeeMTqb-cvOxbd67QenbRlZM4bGAIB8_KXdTfI7-7lYVKN1ovAhCaA", "type": "ACTIVE", "conversationToken": "[]" }, "inputs": [ { "intent": "actions.intent.MEDIA_STATUS", "rawInputs": [ { "inputType": "VOICE" } ], "arguments": [ { "name": "MEDIA_STATUS", "extension": { "@type": "type.googleapis.com/google.actions.v2.MediaStatus", "status": "FINISHED" } } ] } ], "surface": { "capabilities": [ { "name": "actions.capability.MEDIA_RESPONSE_AUDIO" }, { "name": "actions.capability.ACCOUNT_LINKING" }, { "name": "actions.capability.WEB_BROWSER" }, { "name": "actions.capability.SCREEN_OUTPUT" }, { "name": "actions.capability.AUDIO_OUTPUT" } ] }, "isInSandbox": true, "availableSurfaces": [ { "capabilities": [ { "name": "actions.capability.WEB_BROWSER" }, { "name": "actions.capability.AUDIO_OUTPUT" }, { "name": "actions.capability.SCREEN_OUTPUT" } ] } ], "requestType": "SIMULATOR" } }, "session": "projects/df-responses-kohler/agent/sessions/ABwppHHsebncupHK11oKhsCTgyH96GRNYH-xpeeMTqb-cvOxbd67QenbRlZM4bGAIB8_KXdTfI7-7lYVKN1ovAhCaA" }

JSON

Note that the JSON below describes a webhook request.

{ "user": { "locale": "en-US", "lastSeen": "2019-08-06T07:38:40Z", "userVerificationStatus": "VERIFIED" }, "conversation": { "conversationId": "ABwppHGcqunXh1M6IE0lu2sVqXdpJfdpC5FWMkMSXQskK1nzb4IkSUSRqQzoEr0Ly0z_G3mwyZlk5rFtd1w", "type": "NEW" }, "inputs": [ { "intent": "actions.intent.MEDIA_STATUS", "rawInputs": [ { "inputType": "VOICE" } ], "arguments": [ { "name": "MEDIA_STATUS", "extension": { "@type": "type.googleapis.com/google.actions.v2.MediaStatus", "status": "FINISHED" } } ] } ], "surface": { "capabilities": [ { "name": "actions.capability.SCREEN_OUTPUT" }, { "name": "actions.capability.WEB_BROWSER" }, { "name": "actions.capability.AUDIO_OUTPUT" }, { "name": "actions.capability.MEDIA_RESPONSE_AUDIO" }, { "name": "actions.capability.ACCOUNT_LINKING" } ] }, "isInSandbox": true, "availableSurfaces": [ { "capabilities": [ { "name": "actions.capability.WEB_BROWSER" }, { "name": "actions.capability.AUDIO_OUTPUT" }, { "name": "actions.capability.SCREEN_OUTPUT" } ] } ], "requestType": "SIMULATOR" }

Table cards

Table cards allow you to display tabular data in your response (for example, sports standings, election results, and flights). You can define columns and rows (up to 3 each) that the Assistant is required to show in your table card. You can also define additional columns and rows along with their prioritization.

Tables differ from vertical lists in that tables display static data and, unlike list elements, are not interactive.

Properties

Table cards have the following requirements and optional properties that you can configure:

- Supported on surfaces with the actions.capability.SCREEN_OUTPUT capability.

The following section summarizes how you can customize the elements in a table card.

| Name | Is Optional | Is Customizable | Customization Notes |
|---|---|---|---|
| title | Yes | Yes | Overall title of the table. Must be set if subtitle is set. You can customize the font family and color. |
| subtitle | Yes | No | Subtitle for the table. |
| image | Yes | Yes | Image associated with the table. |
| Row | No | Yes | Row data of the table. Consists of an array of Cell objects. The first 3 rows are guaranteed to be shown, but others might not appear on certain surfaces. Please test with the simulator to see which rows are shown for a given surface. On surfaces that support the |
| ColumnProperties | Yes | Yes | Header and alignment for a column. Consists of a header property (representing the header text for a column) and a horizontal_alignment property (of type HorizontalAlignment). |
| Cell | No | Yes | Describes a cell in a row. Each cell contains a string representing a text value. You can customize the text in the cell. |
| Button | Yes | Yes | A button object that usually appears at the bottom of a card. A table card can only have 1 button. You can customize the button color. |
| HorizontalAlignment | Yes | Yes | Horizontal alignment of content within the cell. Values can be LEADING, CENTER, or TRAILING. If unspecified, content is aligned to the leading edge of the cell. |
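The constraints in the table above can be sketched as a small builder that produces the `tableCard` JSON used in the webhook responses on this page. This is an illustration in plain JavaScript and not the client library's `Table` class: `buildTableCard` and its option names (`columns`, `rows`, `align`, `buttons`) are assumptions made for this example, while the output field names follow the JSON samples.

```javascript
// Allowed HorizontalAlignment values, as listed in the properties table.
const ALIGNMENTS = ['LEADING', 'CENTER', 'TRAILING'];

// Hypothetical builder: turns plain arrays into a tableCard item and enforces
// the documented constraints.
function buildTableCard({title, subtitle, columns = [], rows = [], buttons = []}) {
  if (subtitle && !title) {
    throw new Error('title must be set if subtitle is set');
  }
  if (buttons.length > 1) {
    throw new Error('a table card can only have 1 button');
  }

  // Each column is either a header string or {header, align}.
  const columnProperties = columns.map((col) => {
    const column = typeof col === 'string' ? {header: col} : col;
    const properties = {header: column.header};
    if (column.align !== undefined) {
      if (!ALIGNMENTS.includes(column.align)) {
        throw new Error(`unknown alignment: ${column.align}`);
      }
      properties.horizontalAlignment = column.align;
    }
    return properties;
  });

  // Each row is an array of strings; every string becomes one cell.
  const tableRows = rows.map((cells) => ({
    cells: cells.map((text) => ({text})),
  }));

  const card = {columnProperties, rows: tableRows};
  if (title) card.title = title;
  if (subtitle) card.subtitle = subtitle;
  if (buttons.length === 1) card.buttons = buttons;
  return {tableCard: card};
}
```

For example, `buildTableCard({columns: ['header 1'], rows: [['row 1 item 1']]})` yields an item you could place in the `items` array of a rich response. Omitting `align` leaves alignment unspecified, which the table above describes as leading-edge alignment.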

Sample code

The following snippets show how to implement a simple table card:

Node.js

app.intent('Simple Table Card', (conv) => { if (!conv.screen) { conv.ask('Sorry, try this on a screen device or select the ' + 'phone surface in the simulator.'); conv.ask('Which response would you like to see next?'); return; } conv.ask('This is a simple table example.'); conv.ask(new Table({ dividers: true, columns: ['header 1', 'header 2', 'header 3'], rows: [ ['row 1 item 1', 'row 1 item 2', 'row 1 item 3'], ['row 2 item 1', 'row 2 item 2', 'row 2 item 3'], ], })); conv.ask('Which response would you like to see next?'); });

Java

@ForIntent("Simple Table Card") public ActionResponse simpleTable(ActionRequest request) { ResponseBuilder responseBuilder = getResponseBuilder(request); if (!request.hasCapability(Capability.SCREEN_OUTPUT.getValue())) { return responseBuilder .add("Sorry, try this on a screen device or select the phone surface in the simulator.") .add("Which response would you like to see next?") .build(); } responseBuilder .add("This is a simple table example.") .add( new TableCard() .setColumnProperties( Arrays.asList( new TableCardColumnProperties().setHeader("header 1"), new TableCardColumnProperties().setHeader("header 2"), new TableCardColumnProperties().setHeader("header 3"))) .setRows( Arrays.asList( new TableCardRow() .setCells( Arrays.asList( new TableCardCell().setText("row 1 item 1"), new TableCardCell().setText("row 1 item 2"), new TableCardCell().setText("row 1 item 3"))), new TableCardRow() .setCells( Arrays.asList( new TableCardCell().setText("row 2 item 1"), new TableCardCell().setText("row 2 item 2"), new TableCardCell().setText("row 2 item 3")))))); return responseBuilder.build(); }

Node.js

if (!conv.screen) { conv.ask('Sorry, try this on a screen device or select the ' + 'phone surface in the simulator.'); conv.ask('Which response would you like to see next?'); return; } conv.ask('This is a simple table example.'); conv.ask(new Table({ dividers: true, columns: ['header 1', 'header 2', 'header 3'], rows: [ ['row 1 item 1', 'row 1 item 2', 'row 1 item 3'], ['row 2 item 1', 'row 2 item 2', 'row 2 item 3'], ], })); conv.ask('Which response would you like to see next?');

Java

ResponseBuilder responseBuilder = getResponseBuilder(request); if (!request.hasCapability(Capability.SCREEN_OUTPUT.getValue())) { return responseBuilder .add("Sorry, try this on a screen device or select the phone surface in the simulator.") .add("Which response would you like to see next?") .build(); } responseBuilder .add("This is a simple table example.") .add( new TableCard() .setColumnProperties( Arrays.asList( new TableCardColumnProperties().setHeader("header 1"), new TableCardColumnProperties().setHeader("header 2"), new TableCardColumnProperties().setHeader("header 3"))) .setRows( Arrays.asList( new TableCardRow() .setCells( Arrays.asList( new TableCardCell().setText("row 1 item 1"), new TableCardCell().setText("row 1 item 2"), new TableCardCell().setText("row 1 item 3"))), new TableCardRow() .setCells( Arrays.asList( new TableCardCell().setText("row 2 item 1"), new TableCardCell().setText("row 2 item 2"), new TableCardCell().setText("row 2 item 3")))))); return responseBuilder.build();

JSON

Note that the JSON below describes a webhook response.

{ "payload": { "google": { "expectUserResponse": true, "richResponse": { "items": [ { "simpleResponse": { "textToSpeech": "This is a simple table example." } }, { "tableCard": { "rows": [ { "cells": [ { "text": "row 1 item 1" }, { "text": "row 1 item 2" }, { "text": "row 1 item 3" } ], "dividerAfter": true }, { "cells": [ { "text": "row 2 item 1" }, { "text": "row 2 item 2" }, { "text": "row 2 item 3" } ], "dividerAfter": true } ], "columnProperties": [ { "header": "header 1" }, { "header": "header 2" }, { "header": "header 3" } ] } }, { "simpleResponse": { "textToSpeech": "Which response would you like to see next?" } } ] } } } }

JSON

Note that the JSON below describes a webhook response.

{ "expectUserResponse": true, "expectedInputs": [ { "inputPrompt": { "richInitialPrompt": { "items": [ { "simpleResponse": { "textToSpeech": "This is a simple table example." } }, { "tableCard": { "columnProperties": [ { "header": "header 1" }, { "header": "header 2" }, { "header": "header 3" } ], "rows": [ { "cells": [ { "text": "row 1 item 1" }, { "text": "row 1 item 2" }, { "text": "row 1 item 3" } ], "dividerAfter": true }, { "cells": [ { "text": "row 2 item 1" }, { "text": "row 2 item 2" }, { "text": "row 2 item 3" } ], "dividerAfter": true } ] } }, { "simpleResponse": { "textToSpeech": "Which response would you like to see next?" } } ] } }, "possibleIntents": [ { "intent": "actions.intent.TEXT" } ] } ] }

The following snippets show how to implement a complex table card:

Node.js

app.intent('Advanced Table Card', (conv) => { if (!conv.screen) { conv.ask('Sorry, try this on a screen device or select the ' + 'phone surface in the simulator.'); conv.ask('Which response would you like to see next?'); return; } conv.ask('This is a table with all the possible fields.'); conv.ask(new Table({ title: 'Table Title', subtitle: 'Table Subtitle', image: new Image({ url: 'https://storage.googleapis.com/actionsresources/logo_assistant_2x_64dp.png', alt: 'Alt Text', }), columns: [ { header: 'header 1', align: 'CENTER', }, { header: 'header 2', align: 'LEADING', }, { header: 'header 3', align: 'TRAILING', }, ], rows: [ { cells: ['row 1 item 1', 'row 1 item 2', 'row 1 item 3'], dividerAfter: false, }, { cells: ['row 2 item 1', 'row 2 item 2', 'row 2 item 3'], dividerAfter: true, }, { cells: ['row 3 item 1', 'row 3 item 2', 'row 3 item 3'], }, ], buttons: new Button({ title: 'Button Text', url: 'https://assistant.google.com', }), })); conv.ask('Which response would you like to see next?'); });

Java

@ForIntent("Advanced Table Card") public ActionResponse advancedTable(ActionRequest request) { ResponseBuilder responseBuilder = getResponseBuilder(request); if (!request.hasCapability(Capability.SCREEN_OUTPUT.getValue())) { return responseBuilder .add("Sorry, try this on a screen device or select the phone surface in the simulator.") .add("Which response would you like to see next?") .build(); } responseBuilder .add("This is a table with all the possible fields.") .add( new TableCard() .setTitle("Table Title") .setSubtitle("Table Subtitle") .setImage( new Image() .setUrl( "https://storage.googleapis.com/actionsresources/logo_assistant_2x_64dp.png") .setAccessibilityText("Alt text")) .setButtons( Arrays.asList( new Button() .setTitle("Button Text") .setOpenUrlAction( new OpenUrlAction().setUrl("https://assistant.google.com")))) .setColumnProperties( Arrays.asList( new TableCardColumnProperties() .setHeader("header 1") .setHorizontalAlignment("CENTER"), new TableCardColumnProperties() .setHeader("header 2") .setHorizontalAlignment("LEADING"), new TableCardColumnProperties() .setHeader("header 3") .setHorizontalAlignment("TRAILING"))) .setRows( Arrays.asList( new TableCardRow() .setCells( Arrays.asList( new TableCardCell().setText("row 1 item 1"), new TableCardCell().setText("row 1 item 2"), new TableCardCell().setText("row 1 item 3"))) .setDividerAfter(false), new TableCardRow() .setCells( Arrays.asList( new TableCardCell().setText("row 2 item 1"), new TableCardCell().setText("row 2 item 2"), new TableCardCell().setText("row 2 item 3"))) .setDividerAfter(true), new TableCardRow() .setCells( Arrays.asList( new TableCardCell().setText("row 3 item 1"), new TableCardCell().setText("row 3 item 2"), new TableCardCell().setText("row 3 item 3")))))); return responseBuilder.build(); }

Node.js

if (!conv.screen) { conv.ask('Sorry, try this on a screen device or select the ' + 'phone surface in the simulator.'); conv.ask('Which response would you like to see next?'); return; } conv.ask('This is a table with all the possible fields.'); conv.ask(new Table({ title: 'Table Title', subtitle: 'Table Subtitle', image: new Image({ url: 'https://storage.googleapis.com/actionsresources/logo_assistant_2x_64dp.png', alt: 'Alt Text', }), columns: [ { header: 'header 1', align: 'CENTER', }, { header: 'header 2', align: 'LEADING', }, { header: 'header 3', align: 'TRAILING', }, ], rows: [ { cells: ['row 1 item 1', 'row 1 item 2', 'row 1 item 3'], dividerAfter: false, }, { cells: ['row 2 item 1', 'row 2 item 2', 'row 2 item 3'], dividerAfter: true, }, { cells: ['row 3 item 1', 'row 3 item 2', 'row 3 item 3'], }, ], buttons: new Button({ title: 'Button Text', url: 'https://assistant.google.com', }), })); conv.ask('Which response would you like to see next?');

Java

ResponseBuilder responseBuilder = getResponseBuilder(request); if (!request.hasCapability(Capability.SCREEN_OUTPUT.getValue())) { return responseBuilder .add("Sorry, try this on a screen device or select the phone surface in the simulator.") .add("Which response would you like to see next?") .build(); } responseBuilder .add("This is a table with all the possible fields.") .add( new TableCard() .setTitle("Table Title") .setSubtitle("Table Subtitle") .setImage( new Image() .setUrl( "https://storage.googleapis.com/actionsresources/logo_assistant_2x_64dp.png") .setAccessibilityText("Alt text")) .setButtons( Arrays.asList( new Button() .setTitle("Button Text") .setOpenUrlAction( new OpenUrlAction().setUrl("https://assistant.google.com")))) .setColumnProperties( Arrays.asList( new TableCardColumnProperties() .setHeader("header 1") .setHorizontalAlignment("CENTER"), new TableCardColumnProperties() .setHeader("header 2") .setHorizontalAlignment("LEADING"), new TableCardColumnProperties() .setHeader("header 3") .setHorizontalAlignment("TRAILING"))) .setRows( Arrays.asList( new TableCardRow() .setCells( Arrays.asList( new TableCardCell().setText("row 1 item 1"), new TableCardCell().setText("row 1 item 2"), new TableCardCell().setText("row 1 item 3"))) .setDividerAfter(false), new TableCardRow() .setCells( Arrays.asList( new TableCardCell().setText("row 2 item 1"), new TableCardCell().setText("row 2 item 2"), new TableCardCell().setText("row 2 item 3"))) .setDividerAfter(true), new TableCardRow() .setCells( Arrays.asList( new TableCardCell().setText("row 3 item 1"), new TableCardCell().setText("row 3 item 2"), new TableCardCell().setText("row 3 item 3")))))); return responseBuilder.build();

JSON

Note that the JSON below describes a webhook response.

{ "payload": { "google": { "expectUserResponse": true, "richResponse": { "items": [ { "simpleResponse": { "textToSpeech": "This is a table with all the possible fields." } }, { "tableCard": { "title": "Table Title", "subtitle": "Table Subtitle", "image": { "url": "https://storage.googleapis.com/actionsresources/logo_assistant_2x_64dp.png", "accessibilityText": "Alt Text" }, "rows": [ { "cells": [ { "text": "row 1 item 1" }, { "text": "row 1 item 2" }, { "text": "row 1 item 3" } ], "dividerAfter": false }, { "cells": [ { "text": "row 2 item 1" }, { "text": "row 2 item 2" }, { "text": "row 2 item 3" } ], "dividerAfter": true }, { "cells": [ { "text": "row 3 item 1" }, { "text": "row 3 item 2" }, { "text": "row 3 item 3" } ] } ], "columnProperties": [ { "header": "header 1", "horizontalAlignment": "CENTER" }, { "header": "header 2", "horizontalAlignment": "LEADING" }, { "header": "header 3", "horizontalAlignment": "TRAILING" } ], "buttons": [ { "title": "Button Text", "openUrlAction": { "url": "https://assistant.google.com" } } ] } }, { "simpleResponse": { "textToSpeech": "Which response would you like to see next?" } } ] } } } }

JSON

Note that the JSON below describes a webhook response.

{ "expectUserResponse": true, "expectedInputs": [ { "possibleIntents": [ { "intent": "actions.intent.TEXT" } ], "inputPrompt": { "richInitialPrompt": { "items": [ { "simpleResponse": { "textToSpeech": "This is a table with all the possible fields." } }, { "tableCard": { "title": "Table Title", "subtitle": "Table Subtitle", "image": { "url": "https://storage.googleapis.com/actionsresources/logo_assistant_2x_64dp.png", "accessibilityText": "Alt Text" }, "rows": [ { "cells": [ { "text": "row 1 item 1" }, { "text": "row 1 item 2" }, { "text": "row 1 item 3" } ], "dividerAfter": false }, { "cells": [ { "text": "row 2 item 1" }, { "text": "row 2 item 2" }, { "text": "row 2 item 3" } ], "dividerAfter": true }, { "cells": [ { "text": "row 3 item 1" }, { "text": "row 3 item 2" }, { "text": "row 3 item 3" } ] } ], "columnProperties": [ { "header": "header 1", "horizontalAlignment": "CENTER" }, { "header": "header 2", "horizontalAlignment": "LEADING" }, { "header": "header 3", "horizontalAlignment": "TRAILING" } ], "buttons": [ { "title": "Button Text", "openUrlAction": { "url": "https://assistant.google.com" } } ] } }, { "simpleResponse": { "textToSpeech": "Which response would you like to see next?" } } ] } } } ] }

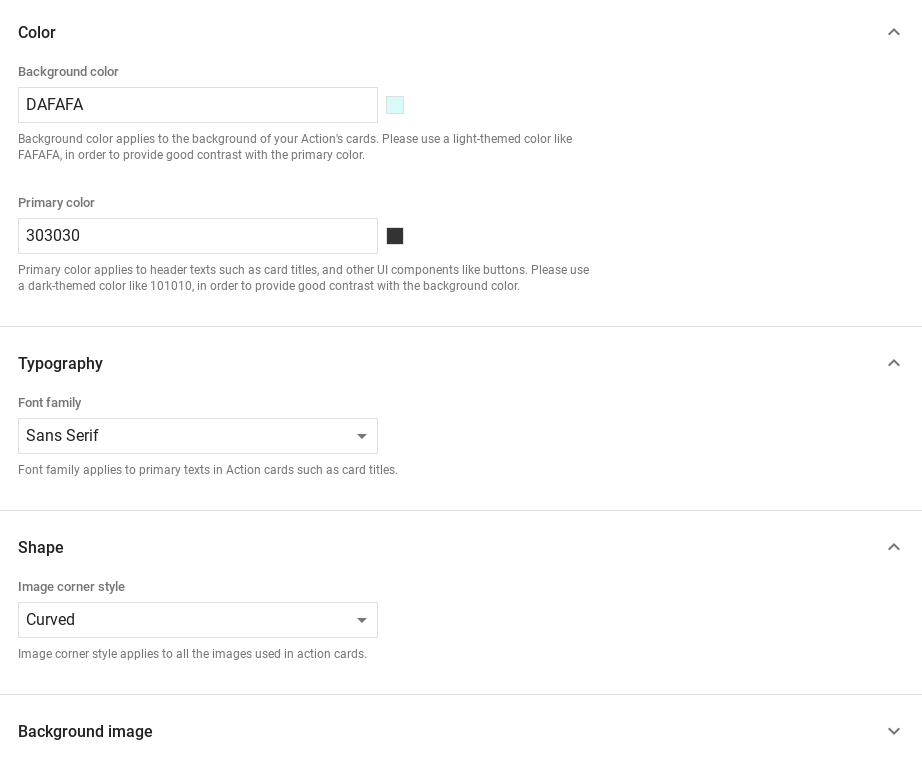

Customizing your responses

You can change the appearance of your rich responses by creating a custom theme. If you define a theme for your Actions project, rich responses across your project's Actions will be styled according to your theme. This custom branding can be useful for defining a unique look and feel to the conversation when users invoke your Actions on a surface with a screen.

To set a custom response theme, do the following:

- In the Actions console, go to Develop > Theme customization.

- Set any or all of the following:

  - Background color to be used as the background of your cards. In general, you should use a light color for the background so the card's content is easy to read.

  - Primary color is the main color for your cards' header texts and UI elements. In general, you should use a darker primary color to contrast with the background.

  - Font family describes the type of font used for titles and other prominent text elements.

  - Image corner style can alter the look of your cards' corners.

  - Background image uses a custom image in place of the background color. You'll need to provide two different images for when the surface device is in landscape or portrait mode, respectively. Note that if you use a background image, the primary color is set to white.

- Click Save.