Schedule an Oracle transfer

The BigQuery Data Transfer Service for Oracle connector lets you automatically schedule and manage recurring load jobs from Oracle into BigQuery.

Limitations

Oracle transfers are subject to the following limitations:

- An Oracle database supports a limited number of simultaneous connections, so the number of simultaneous transfer runs against a single Oracle database is capped at that maximum.

- If a public IP address is not available for the Oracle database connection, you must set up a network attachment that meets the following requirements:

  - The data source must be accessible from the subnet where the network attachment resides.

  - The network attachment must not be in a subnet within the `240.0.0.0/24` range.

  - Network attachments cannot be deleted if there are active connections to the attachment. To delete a network attachment, contact Cloud Customer Care.

  - For the `us` multi-region, the network attachment must be in the `us-central1` region. For the `eu` multi-region, the network attachment must be in the `europe-west4` region.

- The Google Cloud console supports only the `NORMAL` Oracle user role for connecting Oracle to the BigQuery Data Transfer Service. To connect with the `SYSDBA` or `SYSOPER` Oracle user roles, you must use the bq command-line tool.

- The minimum interval between recurring Oracle transfers is 15 minutes. The default interval for a recurring transfer is 24 hours.
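The network attachment and scheduling limits above can be checked locally before you create a transfer. The following POSIX shell sketch is one way to do that; the function names `attachment_region_for`, `ip_to_int`, `overlaps_reserved_range`, and `interval_allowed` are hypothetical helpers, not part of the bq tool or any Google Cloud API, and they only compare strings and numbers.

```shell
# Hypothetical pre-flight checks for the Oracle transfer limitations.
# These functions only compare strings and numbers locally; they do not
# call bq, gcloud, or any Google Cloud API.

# Print the region that a network attachment must use for a BigQuery
# multi-region: us -> us-central1, eu -> europe-west4. For any other
# location this sketch makes no assumption and returns 1.
attachment_region_for() {
  case "$1" in
    us|US) echo "us-central1" ;;
    eu|EU) echo "europe-west4" ;;
    *) return 1 ;;
  esac
}

# Convert a dotted IPv4 address (a.b.c.d) to a 32-bit integer.
ip_to_int() {
  IFS=. read -r o1 o2 o3 o4 <<EOF
$1
EOF
  echo $(( (o1 << 24) + (o2 << 16) + (o3 << 8) + o4 ))
}

# Return 0 if the subnet range in $1 (CIDR, for example 10.0.0.0/24)
# overlaps 240.0.0.0/24, which the network attachment subnet must avoid.
overlaps_reserved_range() {
  start=$(ip_to_int "${1%/*}")
  size=$(( 1 << (32 - ${1#*/}) ))
  start=$(( start - start % size ))   # align to the network address
  end=$(( start + size - 1 ))
  reserved_start=$(ip_to_int 240.0.0.0)
  reserved_end=$(( reserved_start + 255 ))
  [ $(( start <= reserved_end && end >= reserved_start )) -eq 1 ]
}

# Return 0 if a custom repeat interval, in whole minutes, meets the
# 15-minute minimum for recurring Oracle transfers.
interval_allowed() {
  case "$1" in
    ''|*[!0-9]*) return 1 ;;
  esac
  [ "$1" -ge 15 ]
}
```

For example, `overlaps_reserved_range 10.128.0.0/20` returns a non-zero status, so that subnet range passes the `240.0.0.0/24` check. The sketch does not cover single-region locations, because the requirements above only name the `us` and `eu` multi-regions.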

Before you begin

The following sections describe the steps that you need to take before you create an Oracle transfer.

Oracle prerequisites

- Create a user credential in the Oracle database.

- Grant the `CREATE SESSION` system privilege to the user to allow session creation.

- Assign a tablespace to the user account.
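The Oracle prerequisites above map to three SQL statements. The following shell sketch only prints that SQL so that you can review it and run it with a DBA account, for example in SQL*Plus. The function name `oracle_transfer_user_sql` and its arguments are hypothetical placeholders, and your database administrator might require different options, such as a quota or a temporary tablespace.

```shell
# Hypothetical helper: print Oracle SQL that covers the three prerequisites
# for a dedicated transfer user. Review the output, then run it with a
# DBA account. All arguments are placeholders that you supply.
#   $1 user name  $2 password  $3 tablespace
oracle_transfer_user_sql() {
  cat <<EOF
-- Create the user credential.
CREATE USER $1 IDENTIFIED BY "$2";
-- Allow the user to create sessions.
GRANT CREATE SESSION TO $1;
-- Assign a tablespace to the user account.
ALTER USER $1 DEFAULT TABLESPACE $3;
EOF
}
```

For example, `oracle_transfer_user_sql bq_transfer 'ChangeMe123' users > setup_user.sql` writes the statements to a file that you can inspect before running.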

You also need the following Oracle database information to create an Oracle transfer:

| Parameter name | Description |
|---|---|
| `database` | Name of the database. |
| `host` | Hostname or IP address of the database. |
| `port` | Port number of the database. |
| `username` | Username to access the database. |
| `password` | Password to access the database. |
| `connectionType` | The connection type. This can be `SERVICE`, `SID`, or `TNS`. |
| `oracleObjects` | List of Oracle objects to transfer. |
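Some of these values have a fixed set of valid forms, so a quick local check can catch typos before you start the transfer setup. This is a minimal shell sketch under stated assumptions: `check_oracle_params` is a hypothetical helper, it accepts only the uppercase connection types shown in this document, and it treats any TCP port from 1 to 65535 as valid.

```shell
# Hypothetical pre-flight check for the host, port, and connectionType
# values. Prints a message to stderr and returns 1 on the first problem.
#   $1 host  $2 port  $3 connection type
check_oracle_params() {
  host=$1 port=$2 connection_type=$3
  if [ -z "$host" ]; then
    echo "host is empty" >&2; return 1
  fi
  case "$port" in
    ''|*[!0-9]*) echo "port must be a number: $port" >&2; return 1 ;;
  esac
  if [ "$port" -lt 1 ] || [ "$port" -gt 65535 ]; then
    echo "port out of range: $port" >&2; return 1
  fi
  # This sketch assumes the uppercase spellings used in this document.
  case "$connection_type" in
    SERVICE|SID|TNS) ;;
    *) echo "connectionType must be SERVICE, SID, or TNS: $connection_type" >&2
       return 1 ;;
  esac
}
```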

BigQuery prerequisites

- Verify that you have completed all actions required to enable the BigQuery Data Transfer Service.

- Create a BigQuery dataset to store your data.

- If you intend to set up transfer run notifications for Pub/Sub, ensure that you have the `pubsub.topics.setIamPolicy` Identity and Access Management (IAM) permission. Pub/Sub permissions are not required if you only set up email notifications. For more information, see BigQuery Data Transfer Service run notifications.
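The dataset and, optionally, a Pub/Sub topic for run notifications can be created from the command line. The following sketch wraps the `bq mk --dataset` and `gcloud pubsub topics create` commands; the `run` and `create_transfer_prereqs` functions and the `DRY_RUN` variable are hypothetical conveniences that print the commands instead of executing them when `DRY_RUN` is set.

```shell
# Print commands instead of running them when DRY_RUN is set.
run() {
  if [ -n "${DRY_RUN:-}" ]; then printf '%s\n' "$*"; else "$@"; fi
}

# Create the destination dataset and, optionally, a Pub/Sub topic for
# transfer run notifications.
#   $1 project ID  $2 dataset location, such as us  $3 dataset name
#   $4 optional Pub/Sub topic name
create_transfer_prereqs() {
  run bq mk --dataset --location="$2" "$1:$3" || return 1
  if [ -n "${4:-}" ]; then
    run gcloud pubsub topics create "$4" --project="$1"
  fi
}
```

For example, `DRY_RUN=1 create_transfer_prereqs my-project us mydataset oracle-runs` prints both commands without running them.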

Required BigQuery roles

To get the permissions that you need to create a transfer, ask your administrator to grant you the BigQuery Admin (`roles/bigquery.admin`) IAM role.

For more information about granting roles, see Manage access.

This predefined role contains the following permissions, which are required to create a transfer:

- `bigquery.transfers.update` on the user

- `bigquery.datasets.get` on the target dataset

- `bigquery.datasets.update` on the target dataset

You might also be able to get these permissions with custom roles or other predefined roles.
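An administrator who prefers the command line could grant the role with `gcloud projects add-iam-policy-binding`. In this sketch, `grant_bigquery_admin` and the `DRY_RUN` switch are hypothetical, and the principal must carry the prefix that gcloud expects, such as `user:`, `group:`, or `serviceAccount:`.

```shell
# Print commands instead of running them when DRY_RUN is set.
run() {
  if [ -n "${DRY_RUN:-}" ]; then printf '%s\n' "$*"; else "$@"; fi
}

# Grant the BigQuery Admin role on a project.
#   $1 project ID  $2 principal, such as user:alice@example.com
grant_bigquery_admin() {
  run gcloud projects add-iam-policy-binding "$1" \
    --member="$2" \
    --role="roles/bigquery.admin"
}
```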

Set up an Oracle data transfer

Select one of the following options:

Console

In the Google Cloud console, go to the BigQuery page.

Click Data transfers > Create a transfer.

In the Source type section, for Source, select Oracle.

In the Transfer config name section, for Display name, enter a name for the transfer.

In the Schedule options section:

In the Repeat frequency list, select an option to specify how often this transfer runs. To specify a custom repeat frequency, select Custom. If you select On-demand, then this transfer runs when you manually trigger the transfer.

If applicable, select either Start now or Start at set time and provide a start date and run time.

In the Destination settings section, for Dataset, select the dataset you created to store your data.

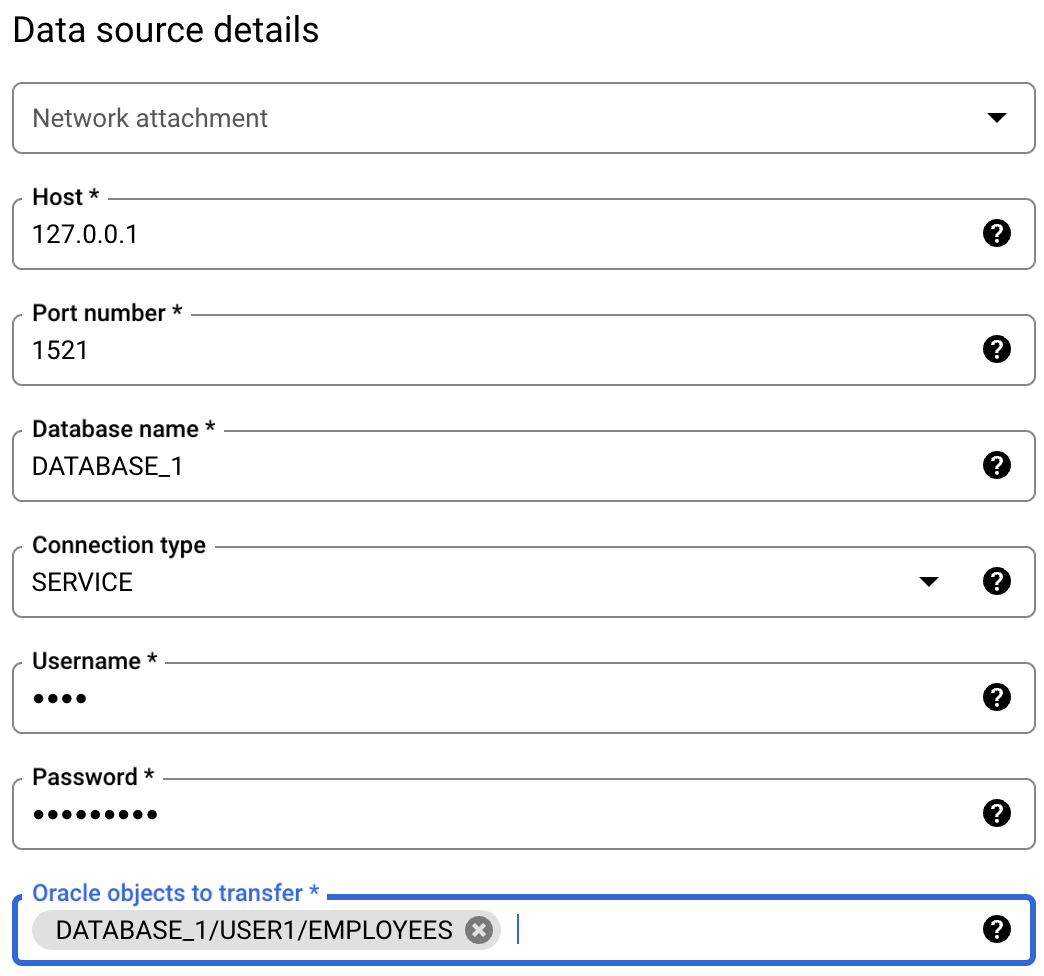

In the Data source details section, do the following:

- For Network attachment, select an existing network attachment or click Create Network Attachment.

- For Host, enter the hostname or IP of the database.

- For Port, enter the port number that the Oracle database is using for incoming connections, such as `1520`.

- For Database name, enter the name of the Oracle database.

- For Connection type, enter the connection URL type, either `SERVICE`, `SID`, or `TNS`.

- For Username, enter the username of the user initiating the Oracle database connection.

- For Password, enter the password of the user initiating the Oracle database connection.

For Oracle objects to transfer, click BROWSE to select any tables to be transferred to the BigQuery destination dataset.

- You can also manually enter any objects to include in the transfer in this field.

In the Service Account menu, select a service account associated with your Google Cloud project. The selected service account must have the required roles to run this transfer.

If you signed in with a federated identity, then a service account is required to create a transfer. If you signed in with a Google Account, then a service account for the transfer is optional.

For more information about using service accounts with data transfers, see Use service accounts.

Optional: In the Notification options section, do the following:

- To enable email notifications, click the Email notification toggle. When you enable this option, the transfer administrator receives an email notification when a transfer run fails.

- To enable Pub/Sub transfer run notifications for this transfer, click the Pub/Sub notifications toggle. You can select your topic name, or you can click Create a topic to create one.

Click Save.

bq

Enter the `bq mk` command and supply the transfer creation flag `--transfer_config`:

```shell
bq mk \
  --transfer_config \
  --project_id=PROJECT_ID \
  --data_source=DATA_SOURCE \
  --display_name=DISPLAY_NAME \
  --target_dataset=DATASET \
  --params='PARAMETERS'
```

Where:

- `PROJECT_ID` (optional): your Google Cloud project ID. If `--project_id` isn't supplied, the default project is used.

- `DATA_SOURCE`: the data source, `oracle`.

- `DISPLAY_NAME`: the display name for the transfer configuration. The transfer name can be any value that lets you identify the transfer if you need to modify it later.

- `DATASET`: the target dataset for the transfer configuration.

- `PARAMETERS`: the parameters for the created transfer configuration in JSON format. For example: `--params='{"param":"param_value"}'`. The following are the parameters for an Oracle transfer:

  - `connector.networkAttachment` (optional): name of the network attachment to connect to the Oracle database.
  - `connector.authentication.username`: username of the Oracle account.
  - `connector.authentication.password`: password of the Oracle account.
  - `connector.database`: name of the Oracle database.
  - `connector.endpoint.host`: the hostname or IP of the database.
  - `connector.endpoint.port`: the port number that the Oracle database is using for incoming connections, such as `1520`.
  - `connector.connectionType`: the connection URL type, either `SERVICE`, `SID`, or `TNS`.
  - `assets`: the paths to the Oracle objects to be transferred to BigQuery, using the format `DATABASE_NAME/SCHEMA_NAME/TABLE_NAME`.
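Quoting the `--params` JSON by hand is error prone, especially for passwords that contain quotes or backslashes. The following shell sketch assembles the JSON from individual values. `json_escape` and `oracle_params_json` are hypothetical helpers, the key names follow the example command in this section, and the escaping handles only double quotes and backslashes (not control characters such as newlines).

```shell
# Escape backslashes and double quotes so a value can sit inside a JSON
# string. Control characters such as newlines are not handled.
json_escape() {
  printf '%s' "$1" | sed -e 's/\\/\\\\/g' -e 's/"/\\"/g'
}

# Build the --params JSON for an Oracle transfer.
#   $1 database  $2 host  $3 port  $4 username  $5 password
#   $6 connection type (SERVICE, SID, or TNS)
#   $7 network attachment resource name, or '' to omit it
#   remaining arguments: objects as SCHEMA_NAME/TABLE_NAME
oracle_params_json() {
  db=$(json_escape "$1")
  host=$(json_escape "$2")
  port=$(json_escape "$3")
  user=$(json_escape "$4")
  pass=$(json_escape "$5")
  ctype=$6
  attachment=$7
  shift 7
  # Each asset path uses the DATABASE_NAME/SCHEMA_NAME/TABLE_NAME format.
  assets=""
  for obj in "$@"; do
    assets="$assets${assets:+,}\"$db/$(json_escape "$obj")\""
  done
  printf '{"assets":[%s],' "$assets"
  printf '"connector.authentication.username":"%s",' "$user"
  printf '"connector.authentication.password":"%s",' "$pass"
  printf '"connector.database":"%s",' "$db"
  printf '"connector.endpoint.host":"%s",' "$host"
  printf '"connector.endpoint.port":"%s",' "$port"
  printf '"connector.connectionType":"%s"' "$ctype"
  if [ -n "$attachment" ]; then
    printf ',"connector.networkAttachment":"%s"' "$attachment"
  fi
  printf '}\n'
}
```

You could then pass the result to bq, for example `--params="$(oracle_params_json DB1 192.168.0.1 1520 User1 "$ORACLE_PASSWORD" SERVICE '' USER1/EMPLOYEES)"`, where `ORACLE_PASSWORD` is a hypothetical environment variable that holds the password.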

For example, the following command creates an Oracle transfer in the default project with all the required parameters:

```shell
bq mk \
  --transfer_config \
  --target_dataset=mydataset \
  --data_source=oracle \
  --display_name='My Transfer' \
  --params='{"assets":["DB1/USER1/DEPARTMENT","DB1/USER1/EMPLOYEES"],
    "connector.authentication.username":"User1",
    "connector.authentication.password":"ABC12345",
    "connector.database":"DB1",
    "connector.endpoint.host":"192.168.0.1",
    "connector.endpoint.port":"1520",
    "connector.connectionType":"SERVICE",
    "connector.networkAttachment":"projects/dev-project1/regions/us-central1/networkattachments/na1"}'
```

API

Use the `projects.locations.transferConfigs.create` method and supply an instance of the `TransferConfig` resource.
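A REST call to `projects.locations.transferConfigs.create` could look like the following shell sketch, which posts a `TransferConfig` body with `curl`. The field names `displayName`, `dataSourceId`, `destinationDatasetId`, `params`, and `schedule` come from the `TransferConfig` resource. The helper functions are hypothetical, the display name is assumed to contain no double quotes, and the `params` argument must already be a valid JSON object.

```shell
# Hypothetical sketch of projects.locations.transferConfigs.create over REST.

# Print a TransferConfig JSON body.
#   $1 project ID (unused here)  $2 location (unused here)
#   $3 dataset  $4 display name (assumed to contain no double quotes)
#   $5 params as a JSON object  $6 optional schedule, such as "every 24 hours"
transfer_config_body() {
  printf '{"displayName":"%s","dataSourceId":"oracle","destinationDatasetId":"%s","params":%s' \
    "$4" "$3" "$5"
  if [ -n "${6:-}" ]; then
    printf ',"schedule":"%s"' "$6"
  fi
  printf '}\n'
}

# POST the body to the BigQuery Data Transfer Service REST endpoint.
# With DRY_RUN set, print the body instead of sending it.
create_transfer_config() {
  body=$(transfer_config_body "$@")
  if [ -n "${DRY_RUN:-}" ]; then
    printf '%s\n' "$body"
    return 0
  fi
  curl -sS -X POST \
    -H "Authorization: Bearer $(gcloud auth print-access-token)" \
    -H "Content-Type: application/json" \
    -d "$body" \
    "https://bigquerydatatransfer.googleapis.com/v1/projects/$1/locations/$2/transferConfigs"
}
```

The optional `schedule` value is a string such as `every 24 hours`; keep the interval at 15 minutes or longer to respect the minimum for Oracle transfers.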

Troubleshoot transfer setup

If you are having issues setting up your transfer, see Oracle transfer issues.
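When troubleshooting, it can help to confirm that the transfer configuration exists and to inspect its settings. The following sketch wraps the `bq ls --transfer_config` and `bq show --transfer_config` commands; the function names and the `DRY_RUN` switch are hypothetical.

```shell
# Print commands instead of running them when DRY_RUN is set.
run() {
  if [ -n "${DRY_RUN:-}" ]; then printf '%s\n' "$*"; else "$@"; fi
}

# List the transfer configurations in a location, for example us.
list_transfer_configs() {
  run bq ls --transfer_config --transfer_location="$1"
}

# Show one configuration as formatted JSON.
#   $1 resource name:
#      projects/PROJECT_NUMBER/locations/LOCATION/transferConfigs/CONFIG_ID
show_transfer_config() {
  run bq show --format=prettyjson --transfer_config "$1"
}
```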

Pricing

There is no cost to transfer Oracle data into BigQuery while this feature is in Preview.

What's next

- For an overview of the BigQuery Data Transfer Service, see Introduction to BigQuery Data Transfer Service.

- For information on using transfers including getting information about a transfer configuration, listing transfer configurations, and viewing a transfer's run history, see Working with transfers.

- Learn how to load data with cross-cloud operations.