PageSpeed Insights (PSI) reports on the user experience of a page on both mobile and desktop devices, and provides suggestions on how that page may be improved.

PSI provides both lab and field data about a page. Lab data is useful for debugging issues, because it is collected in a controlled environment. However, it may not capture real-world bottlenecks. Field data is useful for capturing true, real-world user experience, but it has a more limited set of metrics. See How To Think About Speed Tools for more information on the two types of data.

Real-user experience data

Real-user experience data in PSI is powered by the Chrome User Experience Report (CrUX) dataset. PSI reports real users' First Contentful Paint (FCP), Interaction to Next Paint (INP), Largest Contentful Paint (LCP), and Cumulative Layout Shift (CLS) experiences over the previous 28-day collection period. PSI also reports experiences for the experimental metric Time to First Byte (TTFB).

To show user experience data for a given page, there must be sufficient data for the page to be included in the CrUX dataset. A page might not have sufficient data if it was published recently or has too few samples from real users. When this happens, PSI falls back to origin-level granularity, which encompasses all user experiences on all pages of the website. Sometimes the origin also has insufficient data, in which case PSI cannot show any real-user experience data.
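The fallback order described above can be sketched as a small helper. This is only an illustration: the function name `select_field_data` and the dictionary inputs are hypothetical and do not reflect the shape of any actual PSI or CrUX response.

```python
from typing import Optional, Tuple


def select_field_data(
    page_data: Optional[dict],
    origin_data: Optional[dict],
) -> Optional[Tuple[str, dict]]:
    """Pick which field data to display (hypothetical helper).

    Returns ("page", data) when page-level data exists, otherwise
    ("origin", data), otherwise None when neither level has data.
    An empty dict or None is treated as "insufficient data".
    """
    if page_data:
        return ("page", page_data)
    if origin_data:
        return ("origin", origin_data)
    return None


# A new page with no data of its own falls back to the origin.
print(select_field_data(None, {"LCP": 2600}))  # ('origin', {'LCP': 2600})
```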

Assessing quality of experiences

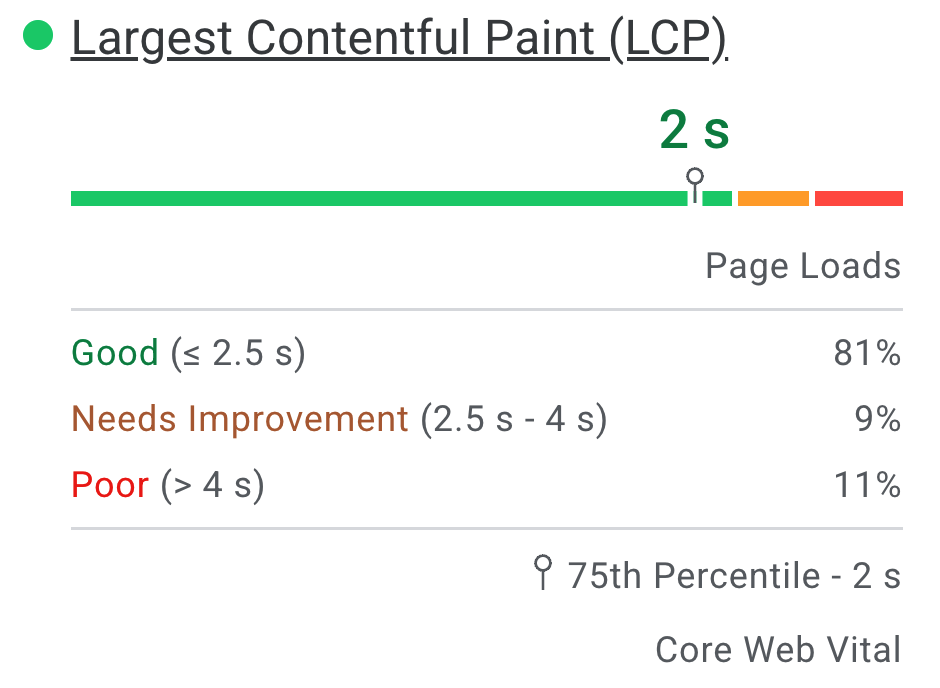

PSI classifies the quality of user experiences into three buckets: Good, Needs Improvement, or Poor. PSI sets the following thresholds in alignment with the Web Vitals initiative:

| | Good | Needs Improvement | Poor |
|---|---|---|---|
| FCP | [0, 1800ms] | (1800ms, 3000ms] | over 3000ms |
| LCP | [0, 2500ms] | (2500ms, 4000ms] | over 4000ms |
| CLS | [0, 0.1] | (0.1, 0.25] | over 0.25 |
| INP | [0, 200ms] | (200ms, 500ms] | over 500ms |
| TTFB (experimental) | [0, 800ms] | (800ms, 1800ms] | over 1800ms |
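As a minimal sketch, the thresholds could be applied in code as follows. The `THRESHOLDS` mapping and the `classify` function are illustrative names, not part of PSI, and each upper bound is assumed to be inclusive: a value of exactly 2500 ms for LCP counts as Good.

```python
# Upper bounds (inclusive) of the Good and Needs Improvement buckets.
# Time-based metrics are in milliseconds; CLS is unitless.
THRESHOLDS = {
    "FCP": (1800, 3000),
    "LCP": (2500, 4000),
    "CLS": (0.1, 0.25),
    "INP": (200, 500),
    "TTFB": (800, 1800),
}


def classify(metric: str, value: float) -> str:
    """Return the bucket name for a metric value (illustrative helper)."""
    good_max, needs_improvement_max = THRESHOLDS[metric]
    if value <= good_max:
        return "Good"
    if value <= needs_improvement_max:
        return "Needs Improvement"
    return "Poor"


print(classify("LCP", 3200))  # Needs Improvement
print(classify("CLS", 0.05))  # Good
```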

Distribution and selected metric values

PSI presents a distribution of these metrics so that developers can understand the range of experiences for that page or origin. This distribution is split into three categories: Good, Needs Improvement, and Poor, which are represented by green, amber, and red bars. For example, seeing 11% within LCP's amber bar indicates that 11% of all observed LCP values fall between 2500 ms and 4000 ms.

Above the distribution bars, PSI reports the 75th percentile for all metrics. The 75th percentile is selected so that developers can understand the most frustrating user experiences on their site. These field metric values are classified as Good, Needs Improvement, or Poor by applying the same thresholds shown above.
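To make the relationship between the bars and the 75th percentile concrete, here is a rough sketch that derives both from a list of raw samples. The sample values are invented, the function names are hypothetical, and the nearest-rank percentile method is an assumption chosen for simplicity; this text does not specify how PSI computes the percentile internally.

```python
import math


def distribution(values, good_max, needs_improvement_max):
    """Fraction of samples falling in each bucket (upper bounds inclusive)."""
    if not values:
        raise ValueError("at least one sample is required")
    n = len(values)
    good = sum(1 for v in values if v <= good_max)
    needs = sum(1 for v in values if good_max < v <= needs_improvement_max)
    poor = n - good - needs
    return {
        "Good": good / n,
        "Needs Improvement": needs / n,
        "Poor": poor / n,
    }


def percentile_75(values):
    """Nearest-rank 75th percentile of the samples."""
    if not values:
        raise ValueError("at least one sample is required")
    ordered = sorted(values)
    rank = math.ceil(0.75 * len(ordered))  # 1-based rank
    return ordered[rank - 1]


# Invented LCP samples in milliseconds, using the LCP thresholds.
lcp_samples = [1200, 1800, 2100, 2400, 2600, 3100, 3900, 5200]
print(distribution(lcp_samples, 2500, 4000))
# {'Good': 0.5, 'Needs Improvement': 0.375, 'Poor': 0.125}
print(percentile_75(lcp_samples))  # 3100
```

In this made-up example, half of the loads are Good, yet the 75th percentile (3100 ms) lands in the Needs Improvement range, which is the value that would be classified.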

Core Web Vitals

Core Web Vitals are a common set of performance signals critical to all web experiences. The Core Web Vitals metrics are INP, LCP, and CLS, and they may be aggregated at either the page or origin level. For aggregations with sufficient data in all three metrics, the aggregation passes the Core Web Vitals assessment if the 75th percentiles of all three metrics are Good. Otherwise, the aggregation does not pass the assessment. If the aggregation has insufficient data for INP, it passes the assessment if the 75th percentiles of both LCP and CLS are Good. If either LCP or CLS has insufficient data, the page-level or origin-level aggregation cannot be assessed.
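The assessment rules above can be expressed as a short decision function. This is a sketch under stated assumptions: the function name is hypothetical, `None` stands for "insufficient data", and the Good upper bounds (2500 ms for LCP, 200 ms for INP, 0.1 for CLS) are taken from the threshold table.

```python
from typing import Optional

# Inclusive upper bounds of the Good bucket for each Core Web Vital.
GOOD_MAX = {"LCP": 2500, "INP": 200, "CLS": 0.1}


def assess_core_web_vitals(
    lcp_p75: Optional[float],
    inp_p75: Optional[float],
    cls_p75: Optional[float],
) -> Optional[bool]:
    """Return True (passed), False (failed), or None (cannot be assessed).

    Pass None for a metric whose aggregation has insufficient data.
    """
    # Without LCP or CLS data, the aggregation cannot be assessed at all.
    if lcp_p75 is None or cls_p75 is None:
        return None
    passed = lcp_p75 <= GOOD_MAX["LCP"] and cls_p75 <= GOOD_MAX["CLS"]
    # INP only participates when it has sufficient data.
    if inp_p75 is not None:
        passed = passed and inp_p75 <= GOOD_MAX["INP"]
    return passed


print(assess_core_web_vitals(2400, None, 0.05))  # True
print(assess_core_web_vitals(2400, 250, 0.05))   # False
print(assess_core_web_vitals(None, 180, 0.05))   # None
```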

Differences between field data in PSI and CrUX

The difference between the field data in PSI and the CrUX dataset on BigQuery is that PSI's data is updated daily, while the BigQuery dataset is updated monthly and is limited to origin-level data. Both data sources represent trailing 28-day periods.

Lab diagnostics

PSI uses Lighthouse to analyze the given URL in a simulated environment for the Performance, Accessibility, Best Practices, and SEO categories.

Score

At the top of the section are scores for each category, determined by running Lighthouse to collect and analyze diagnostic information about the page. A score of 90 or above is considered good. A score from 50 to 89 indicates that the page needs improvement, and a score below 50 is considered poor.
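The score ranges can be captured in a few lines. The function below is an illustrative sketch with a hypothetical name, assuming the score is an integer from 0 to 100 as displayed in the report.

```python
def classify_lighthouse_score(score: int) -> str:
    """Map a 0-100 category score to its rating (illustrative helper)."""
    if not 0 <= score <= 100:
        raise ValueError("score must be between 0 and 100")
    if score >= 90:
        return "Good"
    if score >= 50:
        return "Needs Improvement"
    return "Poor"


print(classify_lighthouse_score(92))  # Good
print(classify_lighthouse_score(67))  # Needs Improvement
print(classify_lighthouse_score(31))  # Poor
```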

Metrics

The Performance category also shows the page's performance on different metrics, including: First Contentful Paint, Largest Contentful Paint, Speed Index, Cumulative Layout Shift, Time to Interactive, and Total Blocking Time.

Each metric is scored and labeled with an icon:

- Good is indicated with a green circle

- Needs Improvement is indicated with an amber informational square

- Poor is indicated with a red warning triangle

Audits

Within each category are audits that provide information on how to improve the page's user experience. See the Lighthouse documentation for a detailed breakdown of each category's audits.

Frequently asked questions

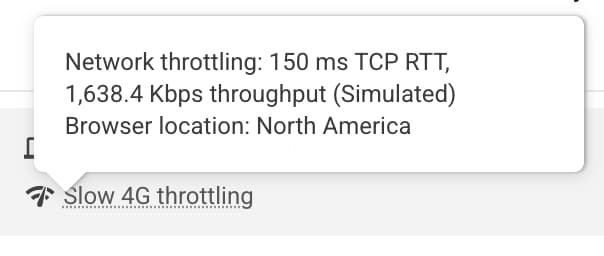

What device and network conditions does Lighthouse use to simulate a page load?

Currently, Lighthouse simulates the page load conditions of a mid-tier device (Moto G4) on a mobile network for mobile, and an emulated desktop with a wired connection for desktop. PageSpeed also runs from a location that can vary based on network conditions; you can check which location was used by looking at the environment block of the Lighthouse report.

Note: PageSpeed will report running in one of the following regions: North America, Europe, or Asia.

Why do the field data and lab data sometimes contradict each other?

The field data is a historical report about how a particular URL has performed, and represents anonymized performance data from real-world users on a variety of devices and network conditions. The lab data is based on a simulated load of a page on a single device and a fixed set of network conditions. As a result, the values may differ. See Why lab and field data can be different (and what to do about it) for more information.

Why is the 75th percentile chosen for all metrics?

Our goal is to make sure that pages work well for the majority of users. Focusing on 75th percentile values for our metrics ensures that pages provide a good user experience under the most difficult device and network conditions. See Defining the Core Web Vitals metrics thresholds for more information.

What is a good score for the lab data?

Any green score (90 or above) is considered good, but note that having good lab data does not necessarily mean real-user experiences will also be good.

Why does the performance score change from run to run? I didn't change anything on my page.

Variability in performance measurement is introduced through a number of channels with different levels of impact. Several common sources of metric variability are local network availability, client hardware availability, and client resource contention.

Why is the real-user CrUX data not available for a URL or origin?

The Chrome User Experience Report aggregates real-world speed data from opted-in users. It requires that a URL be public (crawlable and indexable) and have a sufficient number of distinct samples to provide a representative, anonymized view of the performance of the URL or origin.

More questions?

If you have a question about using PageSpeed Insights that is specific and answerable, ask it in English on Stack Overflow.

If you have general feedback or questions about PageSpeed Insights, or you want to start a general discussion, start a thread in the mailing list.

If you have general questions about the Web Vitals metrics, start a thread in the web-vitals-feedback discussion group.
